Your AI strategy is only as strong as your operating model

AI stalls when layered onto weak operating models. Real value starts when leaders redesign ownership, governance, and work around it.

AI is forcing a harder question than most leadership teams want to answer: what has to change in the business for this technology to deliver real value.

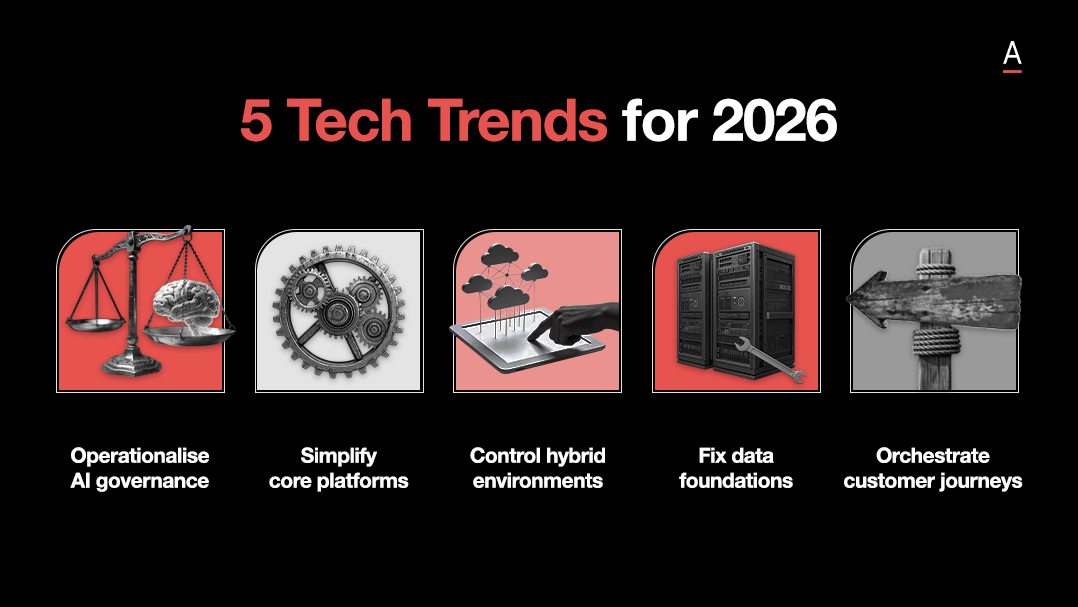

At ADAPT’s 5th Data & AI Edge in Sydney, the strongest thread across the event was that AI keeps stalling when organisations layer it onto old structures, fragmented ownership, and workflows built for a different era.

That is why this is no longer a technology discussion or a future-facing innovation agenda.

It is an enterprise redesign issue sitting squarely with the executive team.

With 92% saying AI success is tied to their career progression, the pressure is already here.

Yet many organisations are still treating AI as something to deploy rather than something that should reshape how decisions are made, how work moves, and how accountability is set.

That is why so many AI programs generate motion without changing performance.

The leaders who move first from tool adoption to operating model redesign will be the ones who turn AI from activity into advantage.

AI fails when it is bolted onto the business instead of built into it

Most AI strategies stall because they treat AI as an added capability rather than a trigger for redesign.

New tools are introduced, use cases are funded, and pilots are launched, but the business underneath stays largely intact.

The same silos remain. The same handoffs slow decisions down.

The same workflows are carried forward with smarter interfaces attached.

That is how AI gets trapped at the edge of the enterprise instead of changing how the enterprise works.

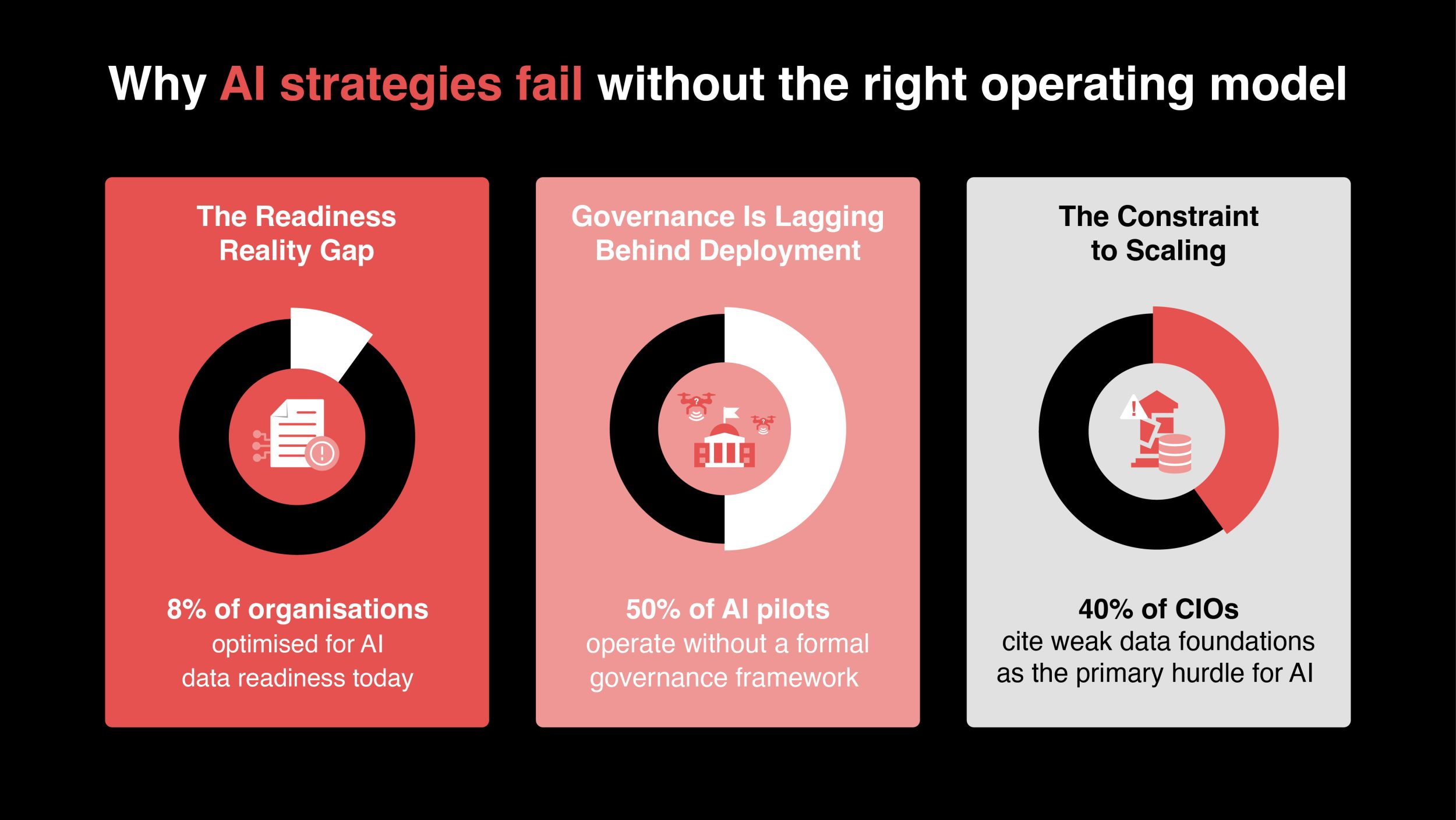

Only 8% say their organisation is optimised for AI data readiness.

And the main constraint is not interest but organisational readiness.

Many businesses are trying to scale AI on top of unresolved issues in data quality, ownership, architecture, and process design.

That pressure sharpens further when 40% of Aussie CIOs say data foundations are the #1 constraint to scaling agentic AI.

When those foundations are weak, AI becomes an overlay.

It may improve a task or accelerate a step, but it rarely changes enterprise performance in a durable way.

The sharper view is that AI forces leaders to decide what kind of organisation they are trying to build.

Katarina Dulanovic, General Manager Data Office at Allianz Australia and Global Group CDO Advisor for Data and AI at Allianz, pushed straight past the usual platform debate and focused on the harder question: how the operating model should change when AI becomes part of day-to-day business execution.

Her argument was that leaders are still spending too much energy debating tools and too little energy redesigning the value chain.

If AI is going to reshape claims, customer service, onboarding, or decision-making, then the roles and workflows behind those activities have to change as well.

Otherwise the organisation is simply recreating the old model with new technology.

Her point about claims assessors made that practical. Once AI changes how work is done, the role itself has to change with it.

That redesign cannot wait until the business case is approved. By then, the organisation is already behind.

That same idea came through from John Roese, Global Chief Technology Officer and Chief AI Officer at Dell Technologies, but with harder commercial proof.

He described inheriting 900 AI projects that generated motion without outcomes.

Dell reset around a clear financial north star, focused on core business functions, reworked processes first, then applied AI to targeted sources of friction.

His sequence (simplify, standardise, then automate) is useful because it captures the order many organisations still get wrong.

AI starts producing enterprise value when the business stops treating it as a separate stream of experimentation and starts using it to redesign how work gets done.

Dell’s results were powerful because they came after structural focus, not before it.

The common thread is hard to ignore.

AI will keep underperforming in organisations that try to insert it into existing structures without questioning how those structures should evolve.

Local productivity gains are possible under that model. Enterprise change is not.

Leaders who want more than isolated wins need to decide where AI should sit in the value chain, where people still add judgment, and where the business itself has to operate differently.

Governance only works when ownership is shared across the enterprise

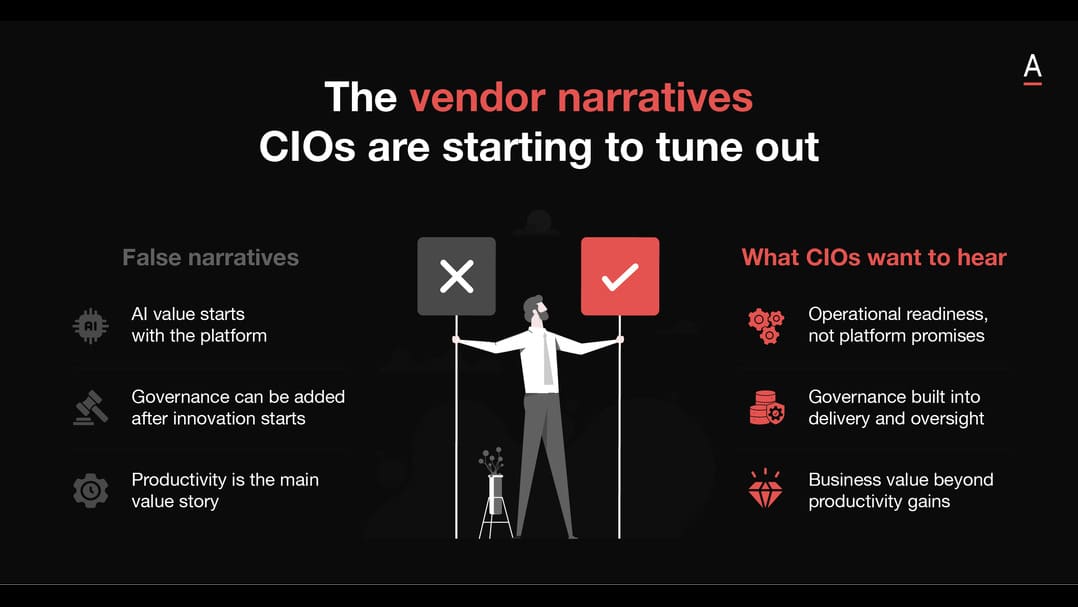

Governance becomes fragile when deployment accelerates faster than accountability, oversight, and decision rights can keep up.

With 50% saying their AI pilots and deployments are not covered by a formal governance framework, the gap is no longer theoretical.

Organisations are already moving faster than their control structures can support.

The weakness is even more exposed at the top, with only 7% of Aussie CIOs saying their organisation has enterprise-wide AI governance with board involvement.

That matters because governance maturity is defined by whether leadership has embedded accountability, oversight, and decision rights at the level where AI is actually being scaled.

The deeper issue is that many organisations still talk about governance as if it belongs to IT, data, legal, or risk alone.

Once AI touches live decisions, customer interactions, employee tools, or operational judgment, governance becomes an enterprise design issue.

It sits in decision rights, process controls, shared accountability, and the quality of collaboration between functions.

If those things are weak, governance remains abstract, even when the policy documents look complete.

Mike Lau, CDAO at ADHA, framed this clearly in the panel discussion.

He argued that a data-driven operating model is about the whole organisation (people, process, technology, and human judgment) rather than autonomous decision-making alone.

This reframes governance as part of value creation.

Human oversight is not friction added to the system. In many operating environments, it is what makes the output usable, contextual, and trustworthy.

AI can surface insights, accelerate analysis, and expand reach, but there are still decisions where context and judgment remain essential.

Samrat Seal, Head of Transformation and Governance, AI and Cyber at Kmart Group, pushed that argument back to the basics. Before leaders talk about scale, they need to know whether the foundations are even in place.

Do they understand lineage across structured and unstructured data? Are stewardship and ownership clear? Is there a secure and repeatable way to build and deploy?

His point was that fragmented AI efforts can still deliver ROI in pockets, but they tend to fail when the organisation tries to scale them.

That is why governance and data foundations have to be treated as operating requirements rather than cleanup work sitting outside the main agenda.

Jen French, GM, AI Acceleration at Commonwealth Bank of Australia, showed what stronger governance looks like in practice.

At CBA, the model is built around group-level frameworks, clear policies, practical toolkits, and cross-functional involvement from the start.

Legal, risk, compliance, technical teams, and business leaders all have a role in shaping how AI is deployed and used.

This shifts governance from a review gate into a delivery discipline.

It gives the organisation a way to move with more speed and more confidence at the same time.

Pooyan Asgari, Chief Data Officer at Domain Group, raised the stakes further by linking multimodal AI, agentic systems, and explainability.

His presentation made the point that more capable models do not reduce the need for governance.

They raise the bar for it. If an organisation is using richer data, more autonomous reasoning, and more consequential outputs, then explainability becomes commercially important, not merely ethically desirable.

A model that cannot explain how it reached an outcome may still be technically impressive, but it will struggle to survive legal, regulatory, or customer scrutiny.

Governance, in that sense, is becoming one of the main conditions for operationalising advanced AI at all.

The organisations that scale AI well will be the ones that stop treating governance as a compliance wrapper and start treating it as a capability.

Strong governance is what allows faster adoption, wider trust, and better decisions across the business.

Weak governance leaves organisations stuck in a cycle of pilots, pauses, and preventable rework.

AI value becomes real when leaders redesign work, not just deploy tools

Deployment is the easy part.

The harder question is how work, ownership, and judgment change once AI enters the process.

Many organisations still behave as if rollout equals impact.

A tool is launched, a use case is approved, and usage is measured, but the underlying workflow remains largely untouched.

In that model, AI improves activity around the edges while the actual customer, employee, or student experience changes very little.

The more useful question is what work should look like after AI enters the process.

Who owns the outcome? What judgment still matters? What task disappears? What gets done earlier?

What improves because the workflow was rebuilt around new capability rather than simply supplemented by it?

Danny Liu, Professor in Educational Technologies at University of Sydney, offered one of the clearest answers.

His work showed that adoption improves when people are given safe ways to experiment and enough control to shape how AI supports their own context.

The value in those examples was not the tool itself. It was the redesign of how support, learning, and engagement could happen at scale.

Staff were able to see AI as an extension of their role rather than a system imposed on them from outside.

That distinction matters because it changes behaviour. People engage more deeply when they can shape how the technology supports the work they are already accountable for.

David Scott, Chief Data and Analytics Officer at University of Sydney, made the executive version of that same point.

He argued that AI-enabled work should sit with the business process owner, rather than the data team or the technology team.

Technical teams can enable, build, and support, but they should not end up owning business outcomes they do not control.

If the people closest to the process do not own the workflow, then the AI initiative remains detached from real operating change.

David also grounded value in metrics the institution already trusts, including student satisfaction, student success, and early intervention.

That is a stronger way to measure AI because it ties adoption to operating performance rather than novelty.

This is also where leaders need to get more direct about workforce change.

If AI is going to alter the shape of work, then roles, responsibilities, and team structures need to move with it.

Katarina raised that directly in her discussion of claims roles.

Jen showed that broad adoption at CBA depends on leaders visibly using AI themselves, building capability, and tracking how people are engaging with the tools.

Danny showed that experimentation grows when the environment feels safe enough for people to explore.

Those are not supporting issues. They are part of the operating model itself.

A real divide is opening up here. Some organisations are still deploying tools into largely unchanged environments and calling that progress.

Others are using AI to rethink workflows, clarify ownership, redesign roles, and reshape how services are delivered.

The second group is where durable value will come from.

Their advantage will not come from having access to better models alone. It will come from being willing to change the business around those models.

Recommended actions for data & AI leaders

If AI is going to move beyond pilots and presentations, leaders need to make a small number of operating decisions early and follow through on them consistently.

- Treat AI as an operating model decision

Define where AI should change how the business runs, where decisions should move faster, and where work needs to be redesigned across the value chain.

- Put business owners on every major AI initiative

Assign a named business owner responsible for outcomes, adoption, and workflow change, rather than leaving accountability with technical teams alone.

- Build governance into delivery, not beside it

Make governance visible in decision rights, approvals, data stewardship, and role accountability, not only in policy documents or review forums.

- Redesign roles before resistance hardens

Identify the teams and job types most likely to change, then involve HR, operational leaders, and frontline managers early enough to shape the transition.

- Measure AI through business outcomes

Only 13% of CIOs say articulating ROI is their biggest challenge in securing AI budgets. Tie value to metrics the organisation already trusts, such as customer satisfaction, claims resolution, conversion, margin, risk reduction, student success, or workforce efficiency. The harder task is usually not asking for budget. It is linking AI investment to operating outcomes the business already believes in.

- Create repeatable ways of working

Move beyond isolated pilots by building shared methods, standards, and reusable patterns that let AI scale across the business.

The strongest message from Data & AI Edge was that AI strategy rises or falls on the operating model beneath it.

Leaders who keep treating AI as a technology rollout will keep getting fragmented results.

Those who use it to redesign ownership, governance, workflows, and roles will give themselves a far better chance of turning momentum into enterprise value.