Agentic AI is forcing leaders to rebuild the business from the inside out

In this ADAPT Insider podcast episode, Claudine Ogilvie, Mark Cameron, and Brett Raven examine why AI adoption is pushing organisations to redefine risk appetite, ownership, and operating discipline before it can scale.

Key takeaways:

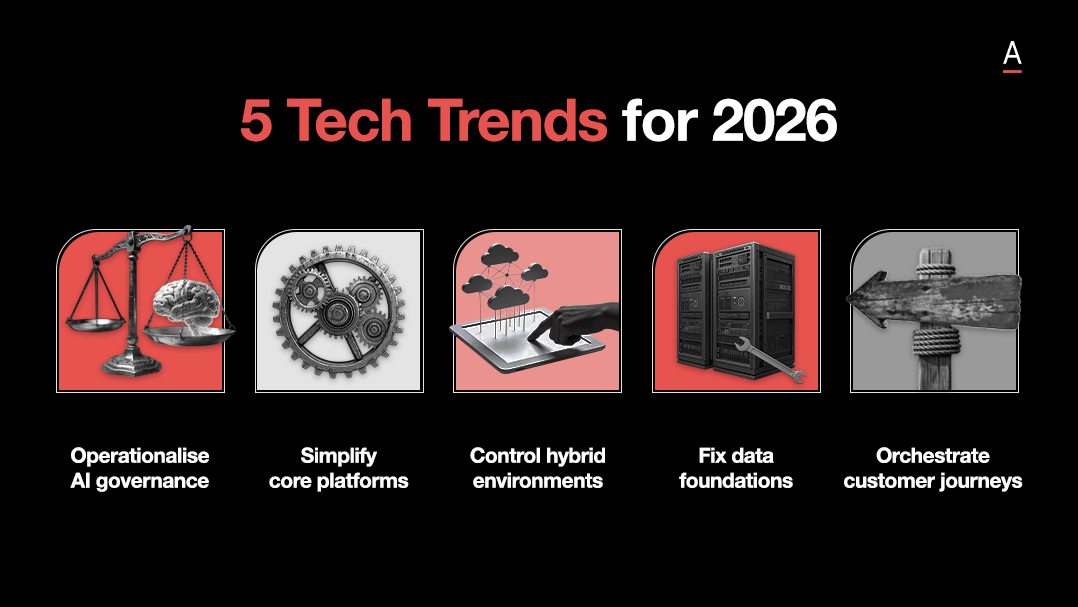

- Boards are moving from broad AI guardrails to clearer risk appetite. Leaders are being forced to define where experimentation is encouraged, where tighter controls apply, and how much downside the organisation is willing to tolerate.

- The strongest AI programs start with a business problem. Fear of missing out is still driving poor sequencing, with many organisations choosing tools first and searching for value later.

- Agentic AI is turning governance into an operating model issue. As AI takes action across workflows, leaders need clearer ownership, stronger controls, and explicit accountability for outcomes.

Boards need clearer risk appetite as AI moves deeper into the business

AI is becoming a board-level issue because it is starting to shape business value, operating models, and enterprise risk.

Broad guardrails were a useful starting point, but they are no longer enough.

Claudine argues that boards are now moving towards clearer policy and more explicit risk appetite.

That matters because leaders need to define where experimentation is encouraged, where tighter control is needed, and how much downside the organisation is prepared to tolerate.

As AI becomes more embedded in the business, governance has to become more specific and more actionable.

The fastest way to waste AI investment is to start with the tool

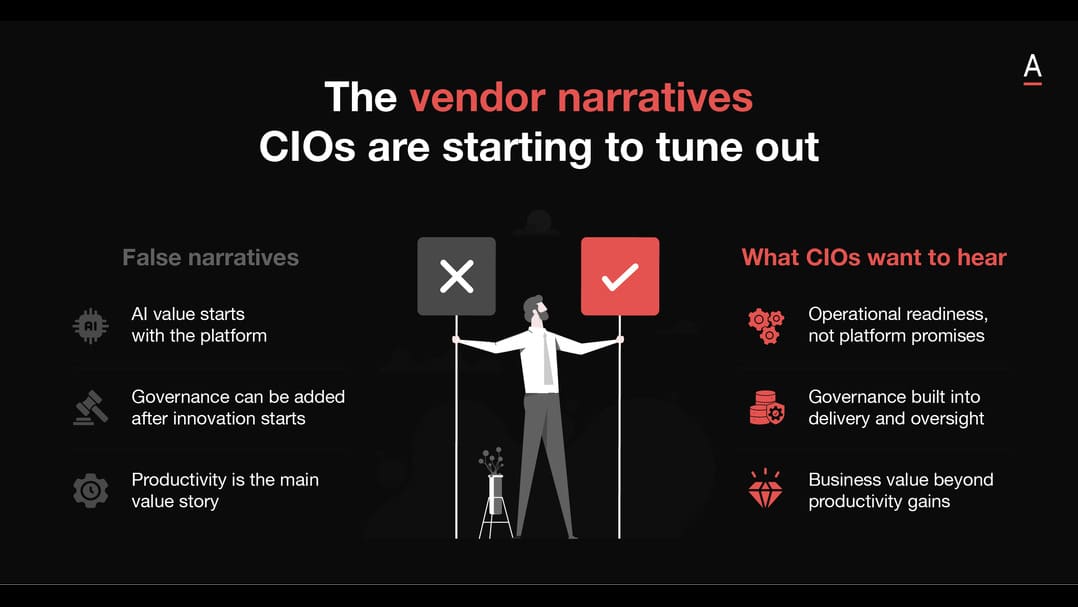

Many organisations are still approaching AI backwards, choosing the platform first and searching for the use case later.

Brett warns that this approach is usually driven by fear of missing out rather than clear strategy.

His argument is simple: start with the business problem, define the value to be created, then assess whether AI is the right fit.

That discipline is what separates scattered experimentation from meaningful adoption.

Agentic AI is turning governance into an operating model issue

Once AI starts acting across workflows, governance becomes much more than a policy discussion. It becomes a question of ownership, access, supervision, and performance.

Mark says leaders need to focus less on chasing every technical update and more on how AI is changing the structure of work itself.

In practice, that means defining the role an agent is performing, the boundaries around its actions, and who remains accountable for its outcomes.
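For teams that want to make this concrete, the three elements above (role, boundaries, accountability) can be written down as a simple record that is checked before an agent acts. The Python sketch below is illustrative only and was not discussed in the episode. The names `AgentCharter`, `Decision`, and `review`, and the invoice example, are all hypothetical assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentCharter:
    """Hypothetical record of an agent's role, boundaries, and owner."""
    role: str                     # the job the agent is performing
    allowed_actions: frozenset    # the boundary around what it may do
    accountable_owner: str        # the person who answers for outcomes


@dataclass(frozen=True)
class Decision:
    allowed: bool
    escalate_to: Optional[str]    # set only when the action is refused


def review(charter: AgentCharter, action: str) -> Decision:
    """Allow actions inside the charter; route everything else to the owner."""
    if action in charter.allowed_actions:
        return Decision(allowed=True, escalate_to=None)
    return Decision(allowed=False, escalate_to=charter.accountable_owner)


# Illustrative charter; the role, actions, and owner are made up.
charter = AgentCharter(
    role="invoice triage",
    allowed_actions=frozenset({"read_invoice", "flag_for_review"}),
    accountable_owner="Head of Finance Operations",
)

print(review(charter, "read_invoice"))
print(review(charter, "approve_payment"))
```

The design choice worth noting is that anything outside the charter is refused and escalated to a named person, so accountability stays with a human rather than with the agent.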

As agentic AI spreads, organisations will need stronger controls and much clearer operating discipline.

The organisations that move fastest will be the ones with the strongest discipline

As AI moves from experimentation into execution, the organisations that pull ahead will be the ones that treat governance, ownership, and operating discipline as core parts of adoption, rather than problems to solve later.