AI creates more value when guardrails open it up to everyone, says EBOS Group’s Chief Data Officer

In this ADAPT Insider episode, Artak Amirbekyan explains why cheaper, more accessible AI should expand use across the business, provided the guardrails are strong enough to protect trust, risk, and accountability.

As AI moves from specialist teams into everyday business use, data leaders have to focus less on scarcity and more on safe, scalable adoption across the organisation.

Artak Amirbekyan, Chief Data Officer at EBOS Group, argues that this shift should not be resisted. Wider use is where the value comes from.

The real task is making that use safe, deliberate, and broad enough that AI becomes part of everyday capability rather than a specialist function sitting off to the side.

Key takeaways:

- AI creates more value when more people can use it, not fewer. The challenge is to widen access without losing control of risk, data, or accountability.

- Guardrails are what turn democratised AI from chaos into value. Restrict the data that must stay inside the organisation, set clear rules, and open the tools up safely across the business.

- AI capability is becoming a baseline skill, not a specialist niche. People with AI fluency are gaining an advantage over people without it, which makes learning and adoption everyone’s responsibility.

Cheaper AI changes who gets to create value

AI becomes more powerful inside organisations when access stops being limited to specialists.

As costs fall and tools become easier to use, more people can experiment, solve problems, and create value directly in their own roles.

That is how Artak explains the current moment.

He says large language models existed before tools like ChatGPT, but they were expensive, specialist, and largely inaccessible.

Once the technology became cheaper and easier to use, adoption surged. For him, that is the pattern leaders should expect.

When AI gets cheaper, more people use it, and that wider use is where much of the return starts to appear.

Guardrails make wider AI use possible

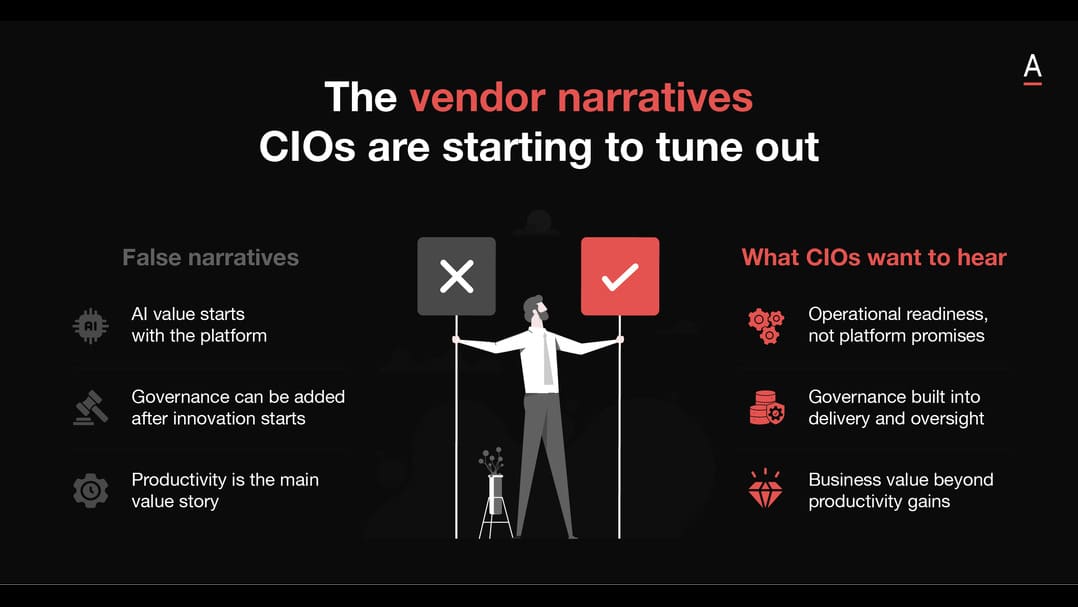

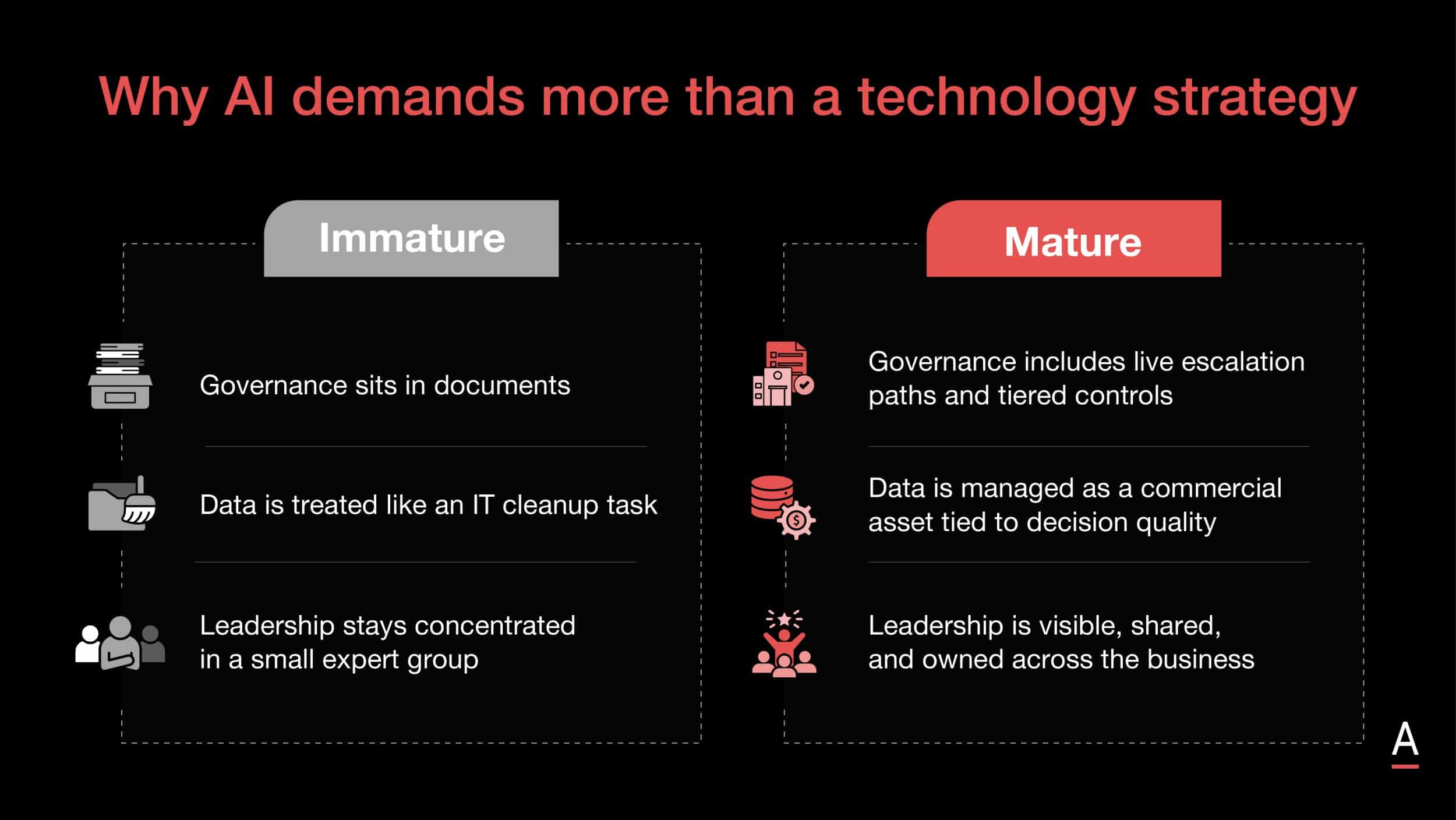

Democratised AI only works inside a business when the organisation is clear about where risk sits and what cannot be compromised.

The answer is not to lock everything down. It is to put enough guardrails in place that more people can use the technology safely.

Artak says companies need practical controls around what data can be shared, what must stay inside the organisation, and which protections need to sit around the models being used.

He cites restrictions on data leaving the business, firewalls, and protections such as indemnity requirements, but his broader point matters more than any single control.

Guardrails should increase safe usage, not reduce it. In his view, organisations get more value from AI when they enable more people to use it within clear boundaries.

AI fluency is becoming everyone’s responsibility

AI capability is moving out of the specialist domain and into general business capability.

That changes both workforce expectations and the role of technical experts.

Artak argues that AI is now everyone’s domain because people with AI skills are starting to outpace those without them.

He also notes that this changes the shape of data and AI roles themselves.

Where companies once searched for rare technical specialists who could both build models and speak to the business, they are now increasingly enabling business users to work with AI directly.

In that environment, data scientists and machine learning engineers spend more time enabling use than building everything themselves.

Learning how to work with AI is becoming part of staying effective at work, not a side topic for a small technical group.

The interesting shift is not simply that AI has become mainstream.

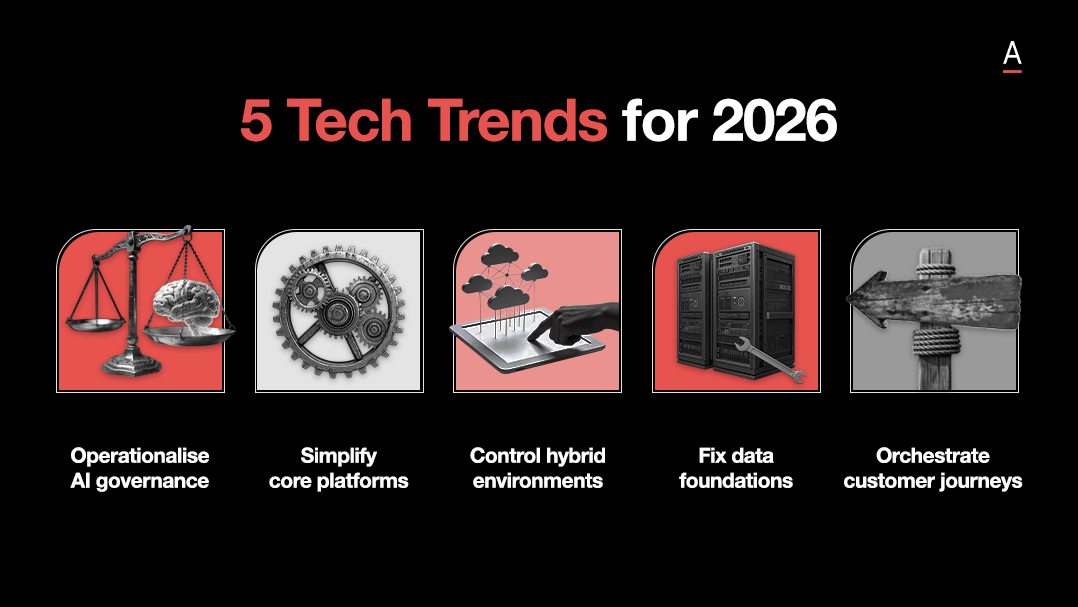

It is that AI is becoming cheap enough, broad enough, and useful enough that companies now need to govern widespread use, not specialist scarcity.