Why AI demands more than a technology strategy

Australian executive teams have largely moved past the question of whether AI matters. The harder question is whether their organisations are ready to redesign themselves around it.

AI is forcing executive teams to confront whether their organisations are built to absorb it.

At ADAPT’s 29th CIO Edge in Sydney on 24 February 2026, more than 220 CIOs and senior technology leaders from across Australia and New Zealand came together to examine AI governance, data foundations, cyber security, and ROI discipline.

Across the event sessions, AI emerged less as a technology story and more as a test of enterprise maturity, exposing how decisions are made, how accountability is assigned, how governance functions in practice, and how future capability will be built.

The challenge is no longer adoption alone. It is whether leaders can reshape the enterprise around new forms of execution, oversight, and learning.

That is what makes this moment more demanding than earlier waves of digital transformation.

AI reaches into workflows, customer interactions, internal decisions, and capital allocation at the same time.

It exposes weak data, unclear ownership, inconsistent governance, and hesitant leadership.

That pressure is visible in investment conversations as well.

Only 13% of CIOs say articulating ROI is their biggest challenge in securing AI budgets, which suggests the harder problem is not describing the prize.

It is proving the organisation can capture it in a controlled, scalable way.

The organisations that move furthest will not be the ones with the most pilots or the widest toolsets.

They will be the ones that use AI to sharpen operating discipline, clarify ownership, strengthen management capability, and rethink how people and systems work together.

1. AI is exposing weak operating models, not just weak technology stacks

Gabby Fredkin, Head of Insights and Analytics at ADAPT, frames the first issue clearly.

Once AI starts shaping customer interactions, internal decisions, and workflow execution, weak foundations stop looking like technical imperfections.

They become constraints on speed, service quality, and value creation.

Poor data, fragmented processes, and inconsistent ownership do not stay buried in the background.

AI brings them to the surface fast.

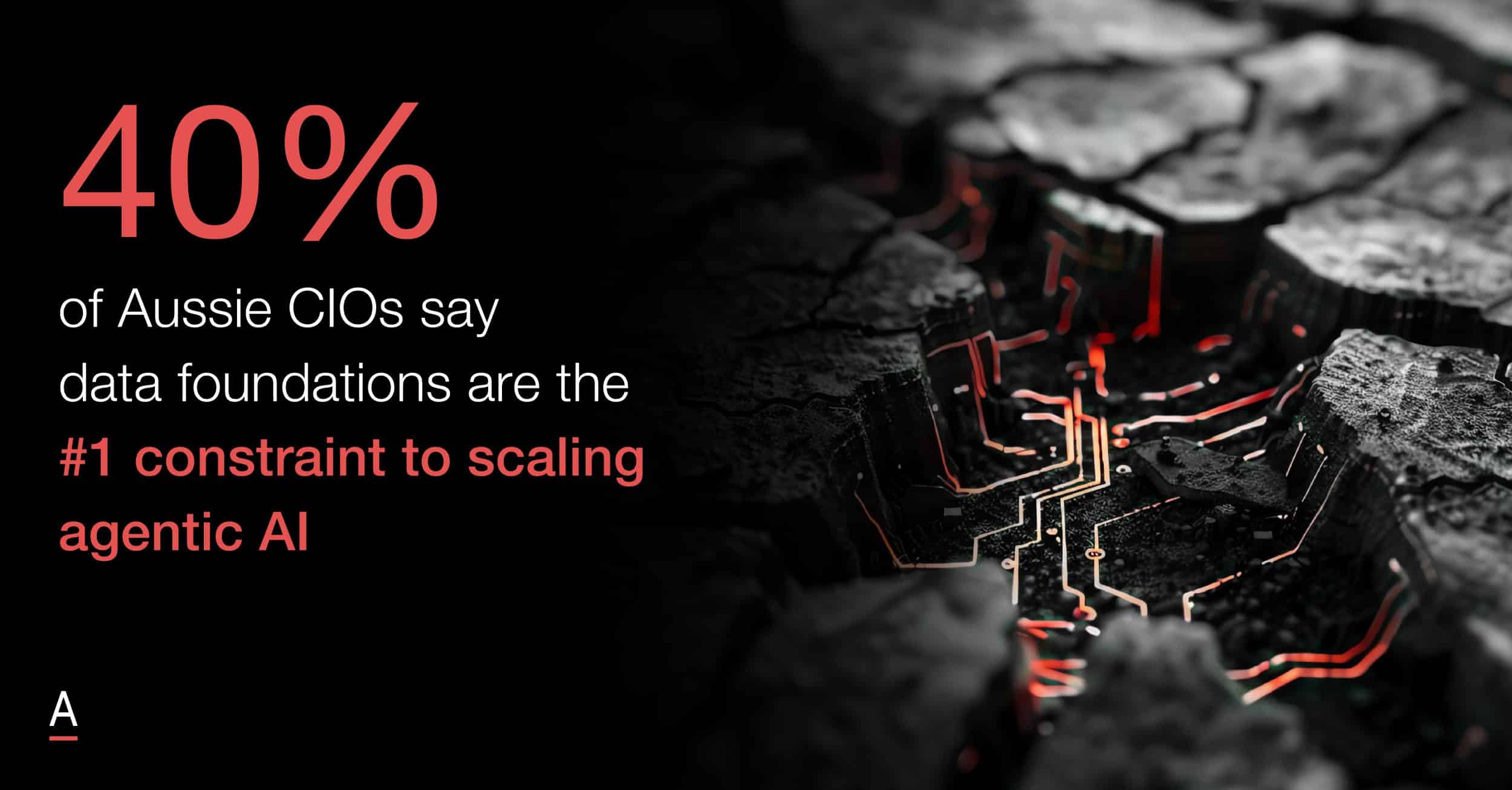

That is why data quality can no longer be treated as a technology clean-up exercise.

Data fragmentation now limits customer context, workflow quality, and decision quality at the same time.

If the organisation cannot trust its inputs, it cannot scale outputs with confidence.

The real bottleneck is often the operating model underneath the tool, not the tool itself.

That becomes even harder to ignore when 40% of Australian CIOs say data foundations are the number one constraint to scaling agentic AI.

Simon Reiter, Chief Technology Officer at CareSuper, brings that point into practical terms.

In his use case exercise, some of the pain points that emerged did not turn out to be real AI opportunities.

They were process fixes and education issues.

They were examples of internal friction that should have been addressed before AI ever entered the picture.

That distinction matters.

It shows how easily AI can become a label attached to friction that already existed.

Some of the strongest AI opportunities begin with workflow redesign, not tool selection, because the real value lies in fixing how work moves before trying to automate it.

In the CIO Edge panel, Jeremy Hubbard, Chief Technology and Data Officer at Rest, and Aarti Joshi, Chief Information Officer at Department of Customer Services, both push a progress-over-perfection approach.

Jeremy argued that weak data foundations should not delay practical AI use cases.

At Rest, AI is already automating contact centre call wrap-up at scale, delivering immediate efficiency gains while broader data maturity work continues.

Aarti made the same case from a public sector perspective, saying organisations should not wait for perfect conditions.

They should start with contained use cases, measure value, and strengthen governance and data in parallel.

That is a more mature path than waiting for perfect conditions or scaling too early.

AI rarely fixes a weak operating model. More often, it amplifies it.

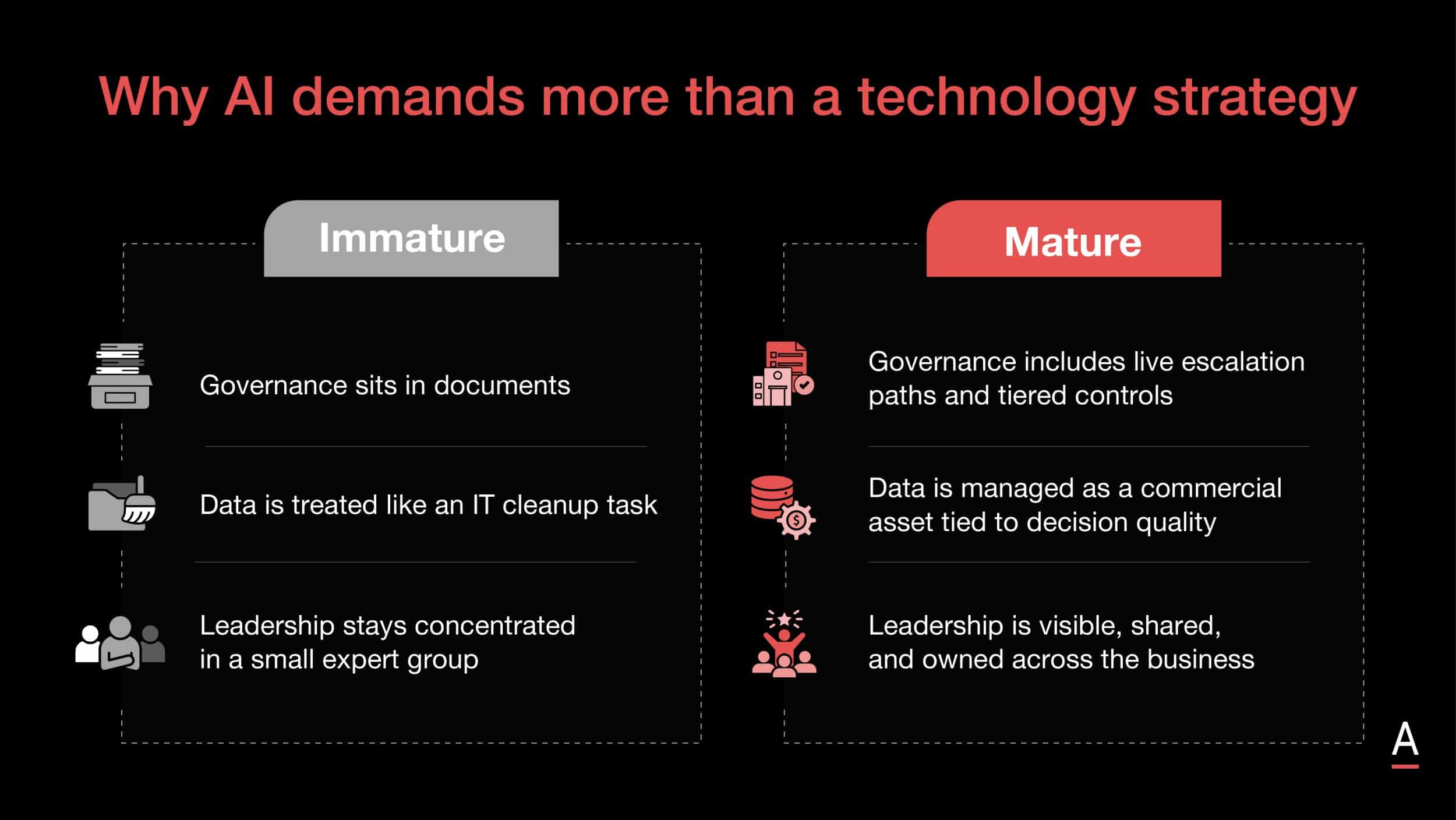

2. AI governance has to become operating infrastructure, not policy theatre

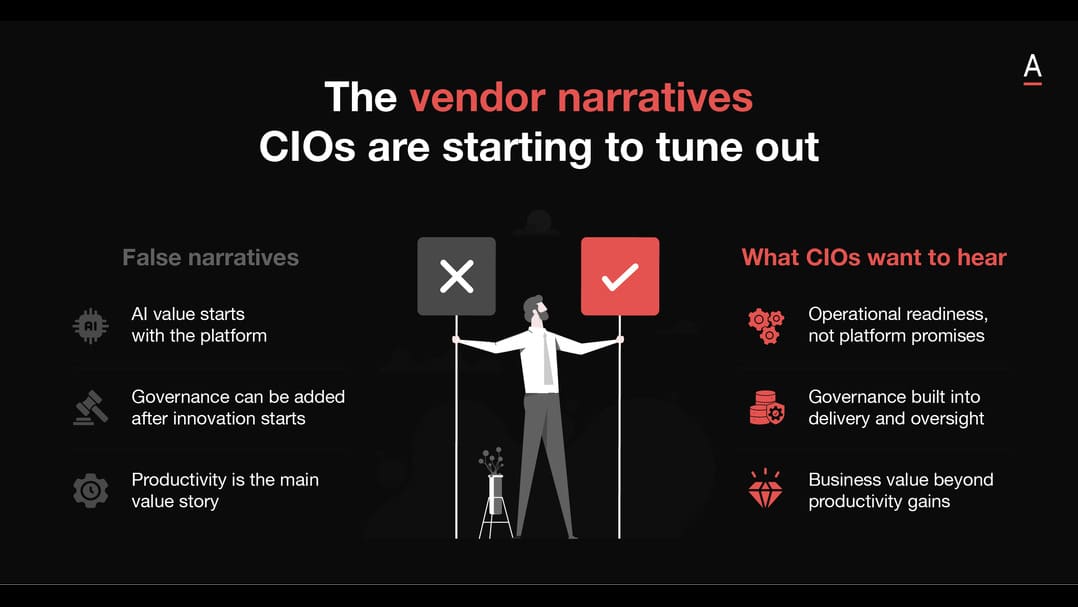

The second theme is where many organisations still mistake intent for readiness.

Governance only matters when it is built into approvals, escalation paths, risk tiers, and day-to-day decision-making.

Without that, it becomes policy theatre: language that signals responsibility without giving teams enough clarity to act.

The maturity gap is still wide.

Only 7% of Australian CIOs say their organisation has enterprise-wide AI governance with board involvement, which helps explain why governance has moved so quickly from policy language into board, risk, and operating conversations.

Gabby’s Zillow example captures the problem well.

The failure was not simply about model risk.

It was about weak oversight, unclear intervention points, and poor visibility across broader exposure.

That is why governance failures are scaling failures.

The real question is not whether an organisation has published principles.

It is whether people know when to step in, who owns the call, and what level of autonomy a system should have in practice.

Governance maturity means defining when humans intervene, not repeating familiar oversight language.

Arul Arogyanathan, Chief Information Officer at Village Roadshow, offers one of the clearest operating responses.

His model of centralised governance with federated execution recognises that coherence and speed both matter.

Guardrails need to be set centrally, but the business teams closest to the work are often best placed to identify value.

Democratising AI works better than leaving transformation to a small central team, provided the guardrails are strong.

That is where tiered controls matter.

A low-risk internal assistant should not face the same approval burden as a customer-facing system with reputational or compliance consequences.

The ADAPT Advisor roundtable extends that argument into leadership.

Mark Cameron, CEO and Director at Alyve; Claudine Ogilvie, CEO at HivePix and former CIO at Jetstar; and Brett Raven, Fractional CTO and CIO at The Consulting CIO, all point to the same conclusion.

Brett argues that leaders need to stop jumping to the tool and work backwards from business strategy, business levers, and business capability.

Claudine makes the case for much more specific board-level risk appetites and a clearer definition of what success looks like.

Mark pushes the discussion beyond short-term efficiency and into long-term organisational capacity, where the real governance question becomes whether the business is being set up to succeed in a world shaped by AI.

Boards need a clearer risk appetite, leaders need to start from the business problem rather than the tool, and literacy has to be continuous because AI is moving faster than most governance habits were built to handle.

3. The bigger Australian AI barrier may be leadership culture, not technology maturity

The third theme goes to the heart of the problem.

Many Australian organisations understand the AI opportunity well enough.

Their bigger constraint may be the culture in which strategic decisions are made.

The barrier is often less about access to technology and more about risk appetite, growth confidence, and ownership of change.

Maile Carnegie, Innovation and Growth leader, makes that point sharply in conversation with Alan Thorogood, Research and Engagement at MIT CISR.

Her comparison between Australian and American public companies is really an observation about operating conditions.

Meaningful AI transformation requires courage, capital, coherent data, strong workflows, and access to talent.

Many Australian organisations are still shaped by a bias towards stability, tighter tolerance for uncertainty, and stronger pressure for near-term certainty.

Under those conditions, awareness of the opportunity does not always translate into willingness to fund or back the redesign it requires.

That cultural caution also shows up in how transformation is owned.

Arul argues against concentrating AI responsibility in a small expert group.

When ownership stays too centralised, the organisation delays learning and treats AI as a side programme instead of a business shift.

His broader point about the CIO role moving from technology manager to business architect matters here.

AI is pushing technology leadership closer to core business design because decisions about systems, workflows, customers, and growth are becoming harder to separate.

Gabby adds another important warning.

As AI automates more junior work, organisations risk hollowing out the layer where future managers and leaders once learned judgement.

That makes capability building a much bigger issue than training alone.

Simon reinforces the same point from a change management perspective.

Trust grows when leaders model AI use visibly, explain where it fits into real work, and create enough psychological safety for people to test, question, and learn.

That is why Australia’s AI challenge is increasingly a leadership one.

Technology maturity still matters, but leadership maturity is becoming the deciding factor.

Actions to take forward

The question for Australian executive teams is no longer whether they have an AI strategy.

It is whether the organisation is structurally ready to absorb AI into how work gets done, how risk is managed, and how capability is built.

- Treat data as a commercial asset tied directly to customer context, workflow quality, decision quality, and enterprise value, rather than as a clean-up programme delegated to technology teams.

- Start with the workflow and the business problem before selecting tools, so that AI use cases emerge from real operational redesign rather than surface-level experimentation.

- Focus early effort on narrow, measurable, high-frequency use cases that can prove value quickly while exposing the process failures those pilots bring to light.

- Build governance into live execution through approvals, escalation paths, intervention thresholds, accountability design, and risk tiers, rather than relying on policy decks and generic oversight language.

- Use centralised governance with federated execution, so business teams closest to the work can help shape value while strong guardrails preserve consistency and control.

- Define risk appetite with greater specificity at board and executive level, so teams know where the boundaries sit and can move faster inside them.

- Lift board and executive literacy continuously, recognising that AI is changing faster than traditional leadership education cycles and now affects operating design as much as technology choice.

- Reassess how capability is built across the organisation as junior work is automated, so learning pathways and future leadership pipelines are strengthened rather than hollowed out.

- Make trust a leadership task by modelling AI use visibly, investing in change management, and creating enough safety for experimentation, adaptation, and learning.

The organisations that move furthest will be the ones that treat AI as a test of enterprise design.

They will use it to sharpen operating discipline, clarify ownership, raise management capability, and rethink how people and systems work together.

Those are the shifts that will separate incremental adopters from organisations that genuinely change how they grow.