Australian CIOs are operating in a market where access is limited and attention is scarce.

Buyers are spending just one hour a month meeting new vendors, which raises the bar on every conversation from the outset.

It is a tougher environment to win attention in, and an even tougher one to hold it.

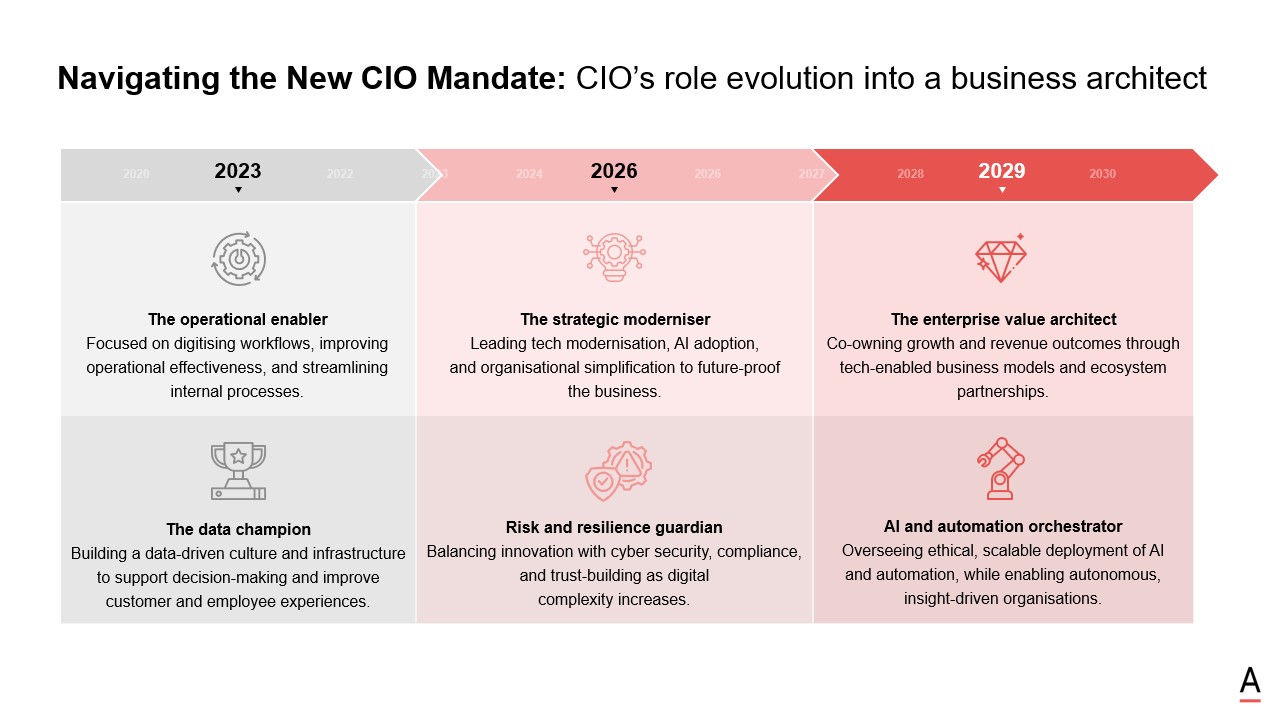

That pressure is landing at the same time as the CIO role itself is expanding.

In this recent ADAPT Analyst Market Briefing, Gabby Fredkin, Head of Analytics and Insights at ADAPT, unpacked what that shift looks like in practice. The findings draw on ADAPT’s CIO Edge survey of 176 respondents, whose organisations represent 25% of Australia’s A$1.9 trillion GDP and 11% of the Australian workforce.

The new CIO mandate shows the role moving beyond operational enablement and into strategic modernisation, enterprise value creation, resilience, and AI orchestration across the business.

The briefing showed how those pressures are beginning to shape CIO priorities and decisions.

The video above is just an excerpt. Access the full session recording.

AI is exposing the gaps organisations have been able to work around

Gabby argued that AI is rarely moving through a clean sequence of pilot, deployment, and scale. Inside most organisations, it follows a rougher cycle: buy it, use it, break it, fix it.

That is a more accurate picture of enterprise AI in 2026 than the tidy maturity curves many vendors still default to.

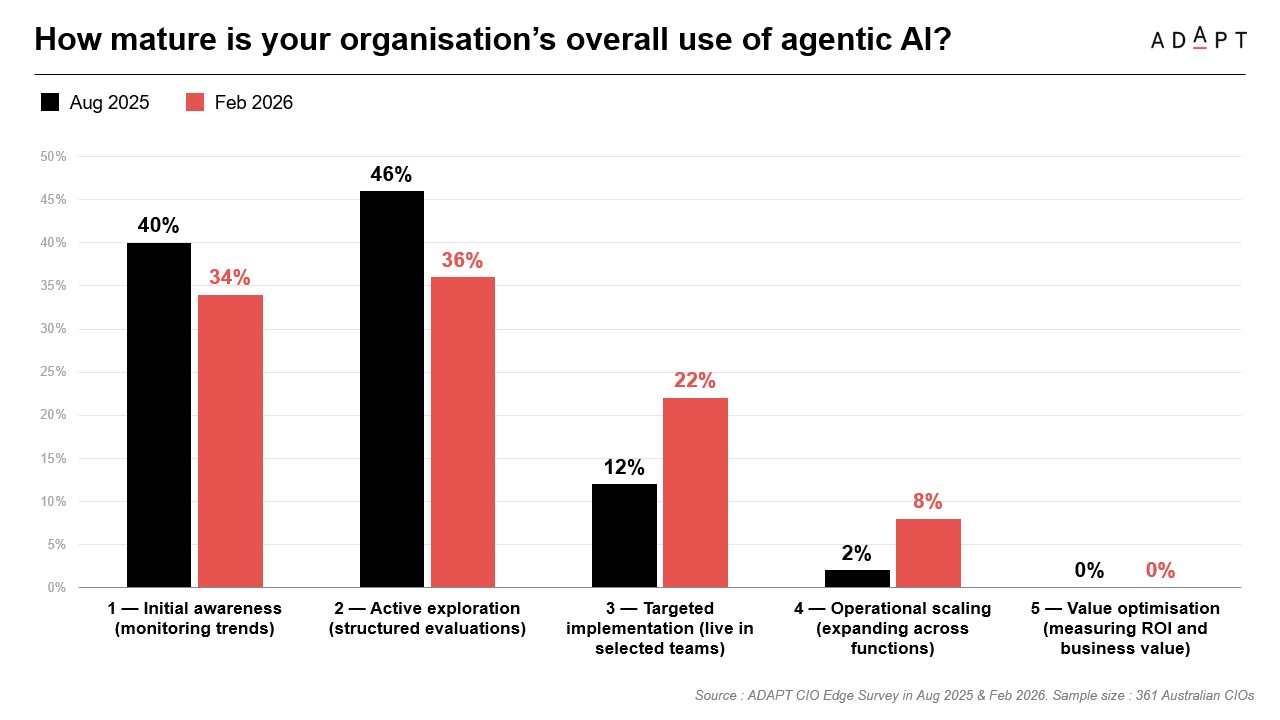

ADAPT’s CIO Edge data shows organisations moving out of early awareness and active exploration into targeted implementation and early operational scaling.

In August 2025, 40% were still at initial awareness and only 2% had reached operational scaling.

By February 2026, initial awareness had dropped to 34%, targeted implementation had lifted to 22%, and operational scaling had risen to 8%.

Value optimisation, however, was still sitting at 0%. AI is entering live workflows faster than most organisations can govern, measure, and redesign work around it.

Gabby’s broader point is that AI is exposing weaknesses that were already there. Some sit in the stack.

Many sit in process, ownership, culture, and leadership alignment.

Once AI starts interacting with live workflows, those weaknesses are harder to mask.

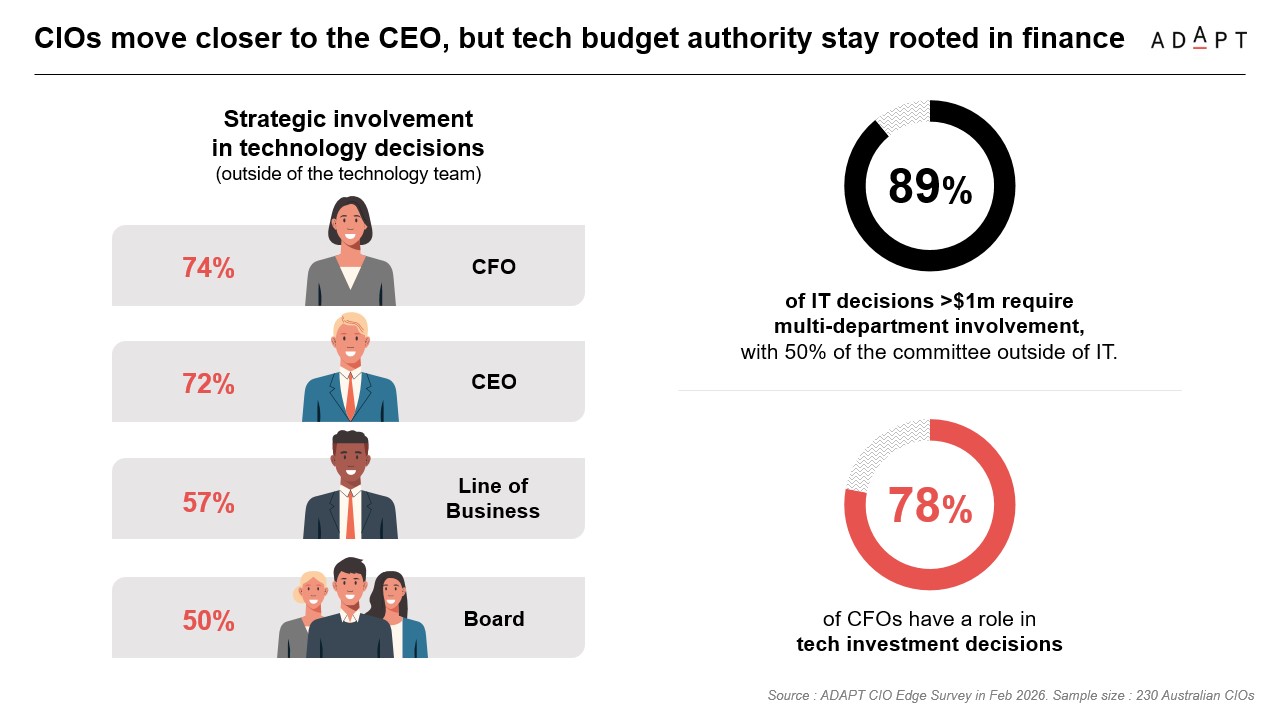

The briefing also reinforced how much broader the decision landscape has become: 89% of IT decisions above $1 million require multi-department involvement, and 50% of the resulting decision committee sits outside IT.

Governance and data quality are now the main blockers to scale

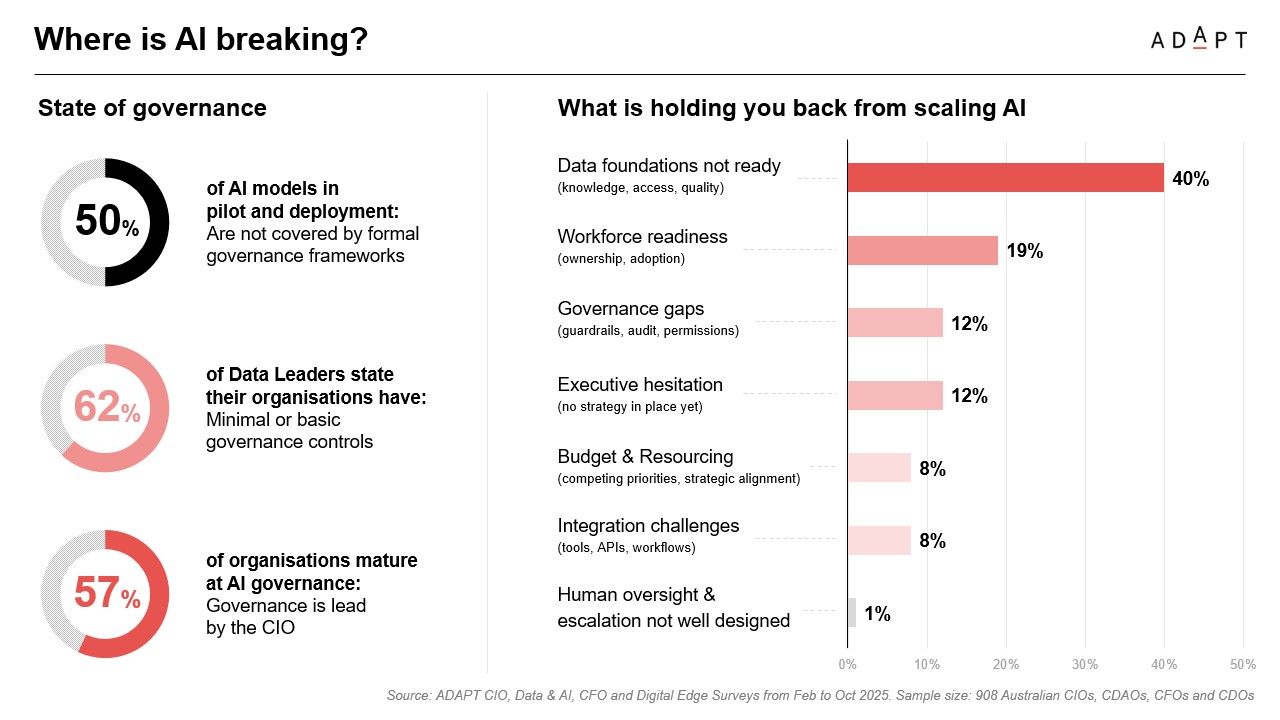

The strongest signal in the briefing was that governance has become a scaling issue, not a side conversation about risk.

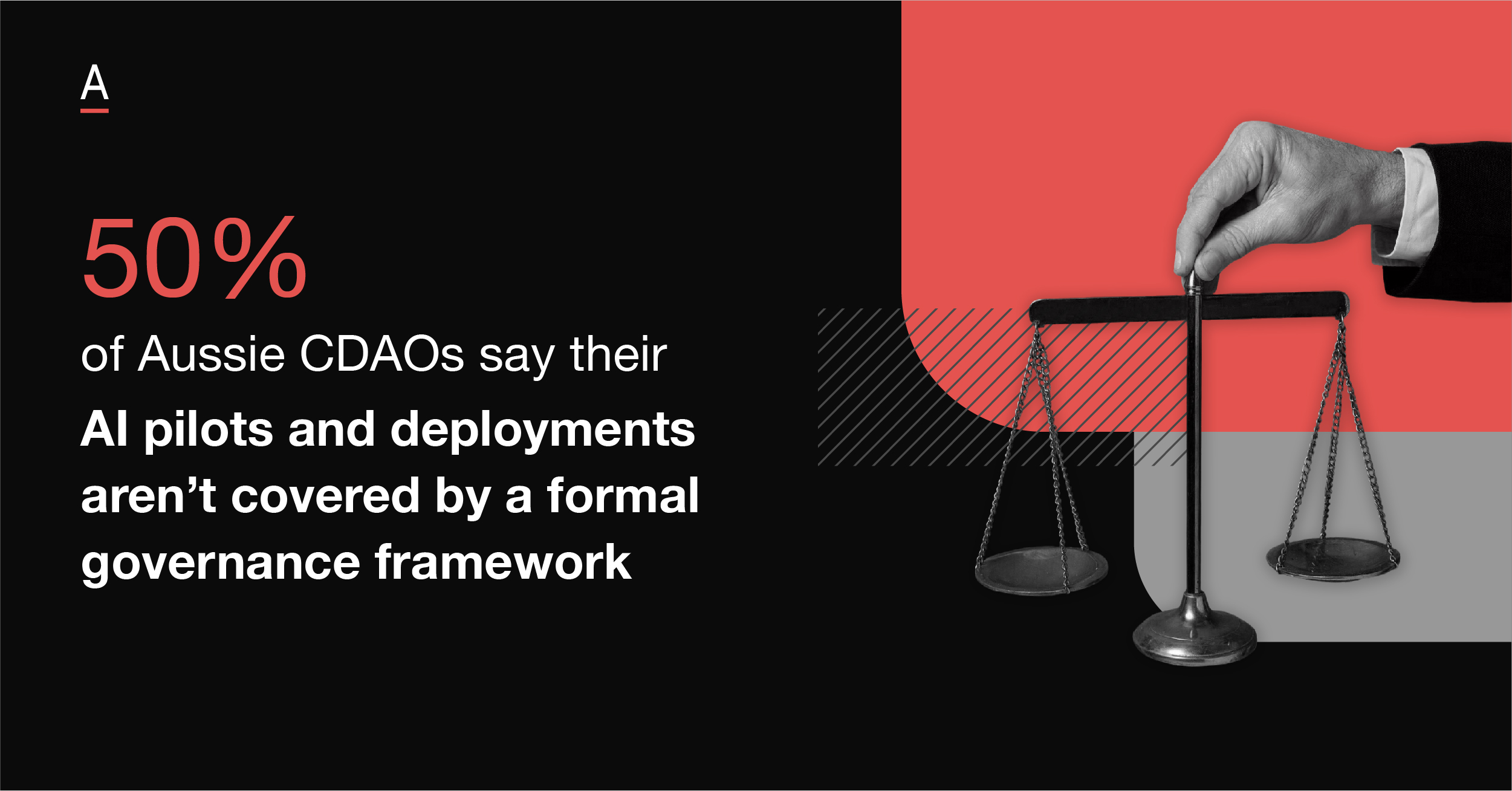

Half of AI models in pilot and deployment are still not covered by a formal governance framework, while 62% of data leaders say their organisations have minimal or basic governance controls.

When ADAPT asked what is holding organisations back from scaling AI, unready data foundations ranked first at 40%, followed by governance gaps at 19% and workforce readiness at 12%.

Gabby made the distinction clearly in the session. This is not a compliance statistic. It is a scaling statistic.

If governance is built into the groundwork, organisations can move models from experimentation into production with clearer controls, permissions, and evaluation.

If it is layered on afterwards, every successful use case creates another argument around risk, ownership, and accountability.

He also separated data governance from AI governance. Good data governance produces high-quality data.

AI governance has to operate in real time, inside live environments, where organisations can test whether outputs are trusted, accessible, and consistent.

That pressure is landing heavily on CIOs.

Among organisations with stronger AI governance maturity, 57% still have governance led by the CIO.

At the same time, AI is moving into finance, HR, procurement, supply chain, marketing, and sales. Once it spreads that far, the risk cannot sensibly sit with one executive.

Legacy environments and weak operating discipline are making AI harder to operationalise

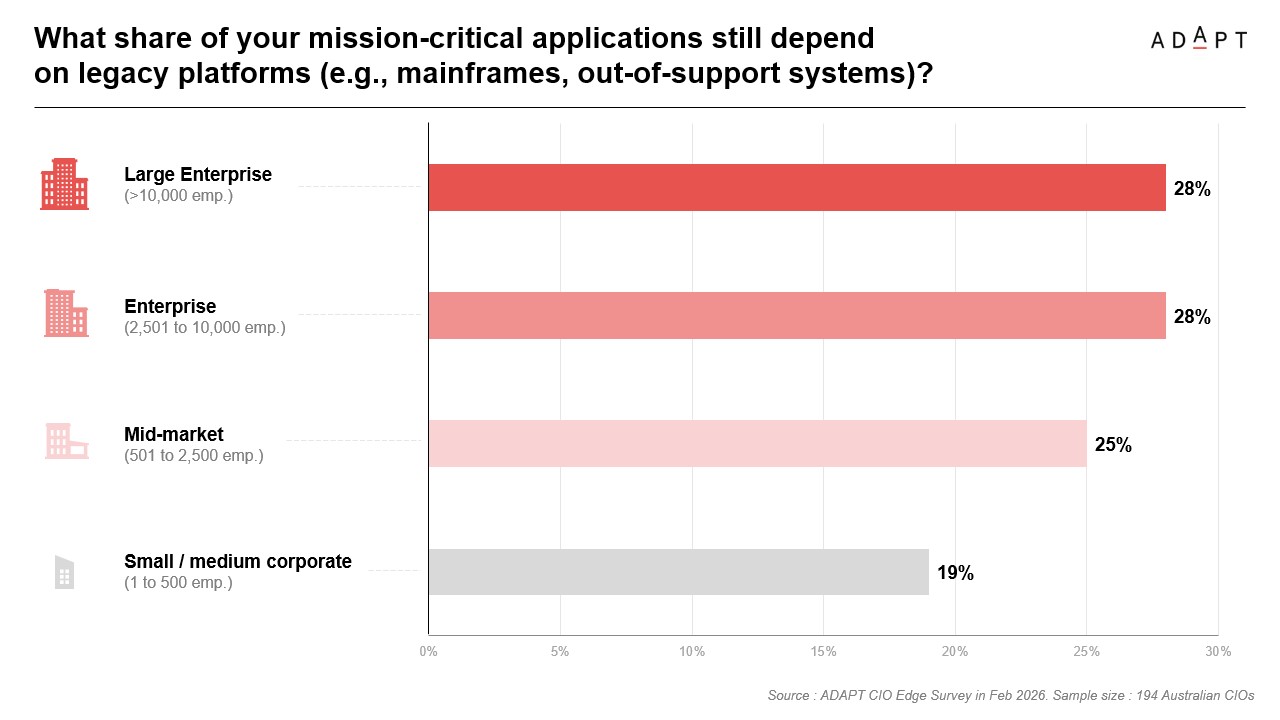

The briefing also showed why AI conversations keep running into modernisation constraints.

A meaningful share of mission-critical applications still depends on legacy platforms across every organisation size, ranging from 19% in large enterprise to 28% in mid-market and small-to-medium corporate environments.

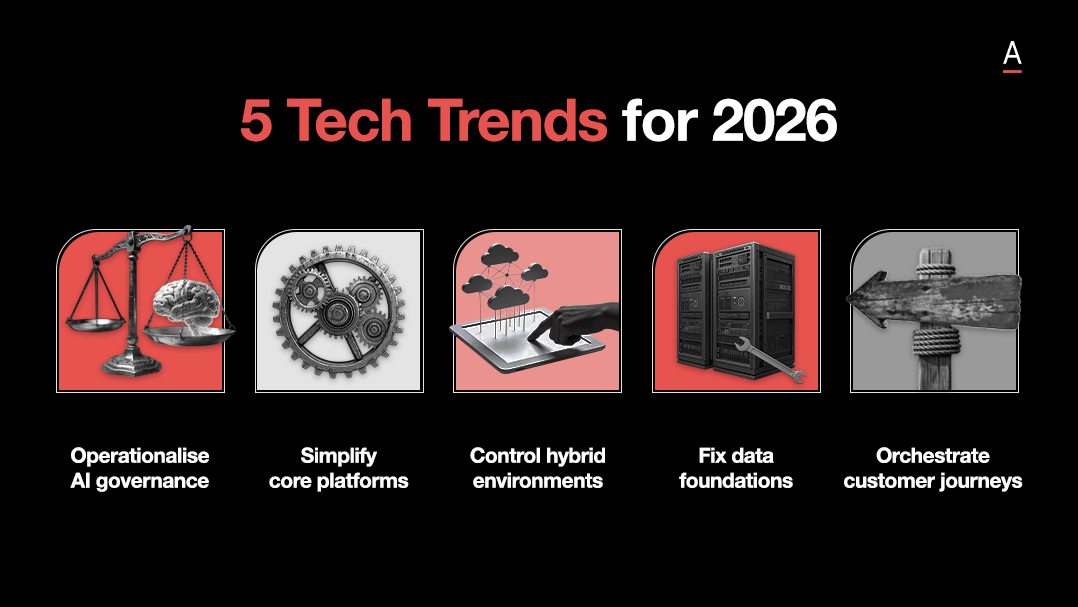

The top upgrade priorities for the next one to two years are enterprise systems, data warehouses and lakes, and digital identity and zero trust.

Gabby’s commentary sharpened that picture.

Many of these systems still support core business operations, even if they no longer support the speed, integration, and visibility modern AI use cases require.

Bigger organisations are still carrying systems built in the 1990s and early 2000s.

They support the business, but they do not support modern digital experience.

That is why organisations are trying to uplift, segment, or work around them while also pushing AI into the environment.

He also cautioned against assuming that a new platform fixes a deeper operating problem.

Moving to a better system does not solve weak data quality, vague ownership, or poor process discipline if those issues are carried straight into the new environment.

The same logic applies to shadow AI.

Gabby noted that individuals are already building or using agents outside formal governance structures, partly because the tools are moving faster than internal controls.

That makes the environment harder to police and raises a harder question about where personal experimentation ends and enterprise responsibility begins.

The operating model will decide whether AI creates value

The most useful thread in Gabby’s insights is that AI success is becoming less about the tool itself and more about how the organisation is structured around it.

Anthony Saba made the same point in the session when he described a technology leadership team that spent months building an AI use case, only to find that the business cared about solving a concrete production cost problem instead.

The disconnect was not technical. It was operational.

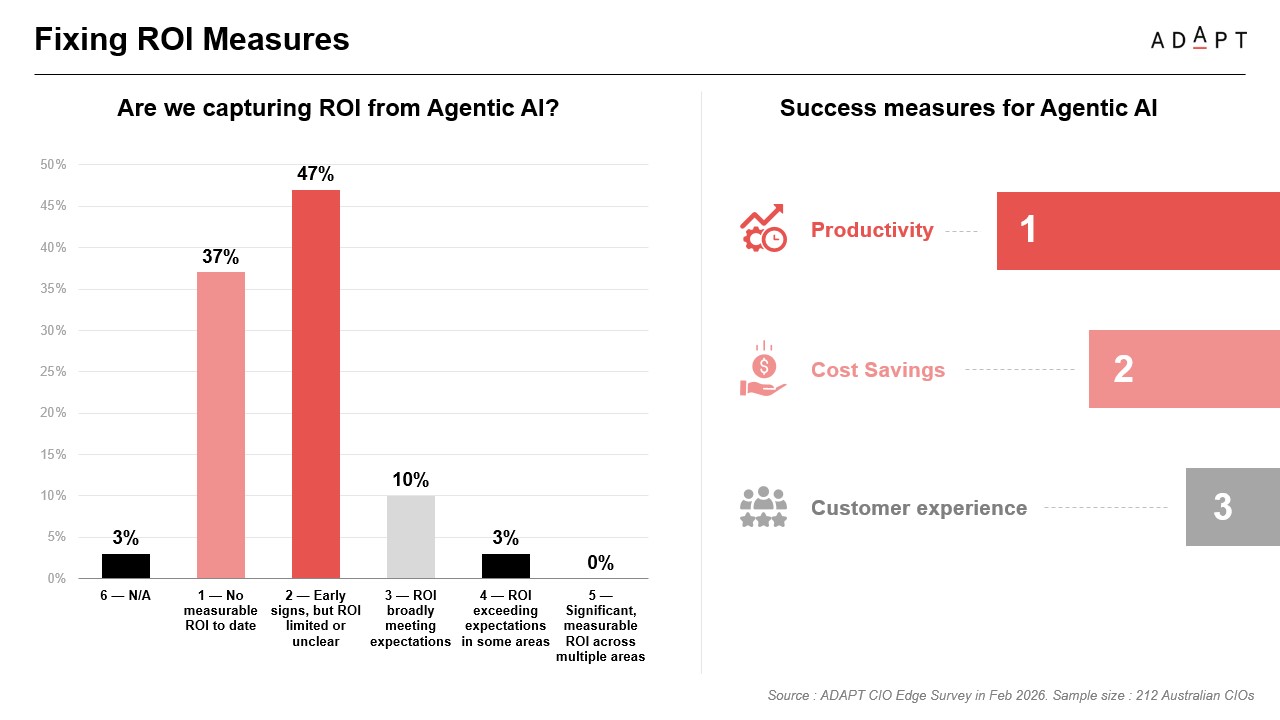

The ROI data makes the same point.

Only 10% said ROI was broadly meeting expectations, and just 3% said it was exceeding expectations in some areas.

No one reported significant measurable ROI across multiple areas.

The top success measures being used were productivity, cost savings, and customer experience.

Gabby’s challenge was simple.

If organisations had clearer measures in place and knew what they wanted AI to deliver, they would be in a stronger position to judge value.

He also pushed the harder point that time saved only matters if it is redirected into better outcomes.

If AI gives people hours back and those hours simply become more meetings, the tool may be working while the operating model stays unchanged.

Recommended actions for technology vendors

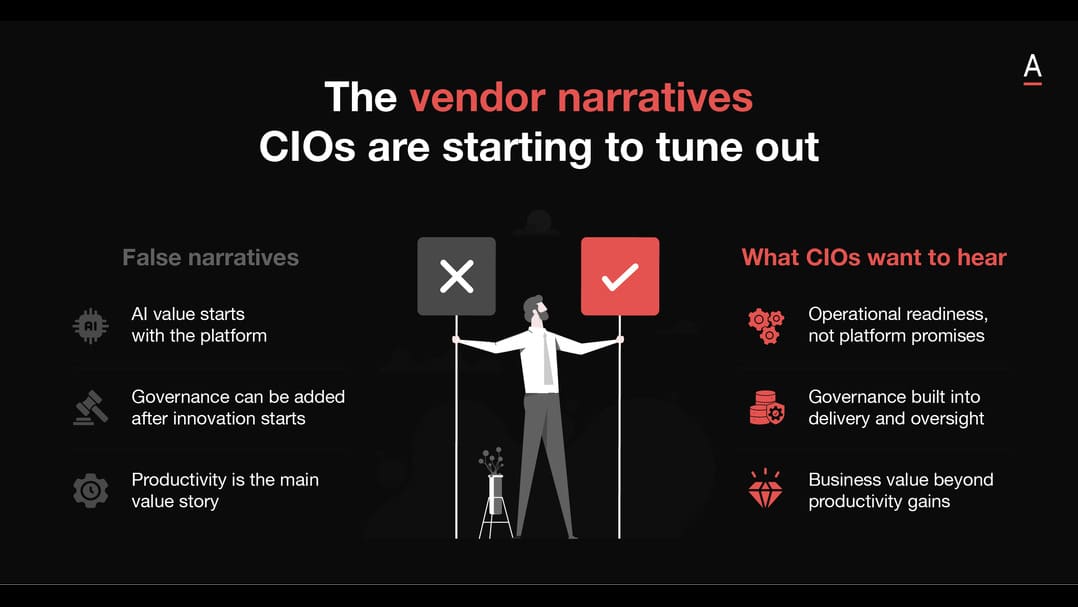

Vendors targeting CIOs need to respond to this shift with more discipline.

- Lead with organisational readiness, not tool novelty. CIOs are already seeing enough AI capability claims. The stronger conversation is about where governance, data quality, and ownership will break under scale.

- Tie AI to a real operating problem. Organisations lose momentum when AI is framed as a solution looking for a problem. Anchor the discussion in workflow friction, service bottlenecks, or decision latency first.

- Treat governance as a scaling enabler. The market is moving past broad responsible AI messaging. CIOs need help embedding controls, accountability, and escalation into live environments so pilots can move into production with less resistance.

- Push harder on measurement discipline. If ROI is still unclear for most organisations, that is an opening. Help leaders define what success looks like before rollout, including productivity, cost, service, and workflow metrics.

- Speak to the operating model. The strongest AI narrative in the market now is about how teams, decisions, and processes need to change. Vendors that stay trapped in feature language will sound shallow to buyers wrestling with organisational redesign.

CIOs do not need more AI noise. They need help scaling AI without adding risk, complexity, or operational drag.

The vendors that win will speak to execution. They will show how AI can be governed in production, measured early, and deployed inside the reality of legacy systems, cross-functional buying groups, and stretched teams.

The upside will not go to the loudest AI story.

It will go to the vendors that help CIOs move from experimentation to controlled scale, with clearer outcomes in productivity, cost, customer experience, and speed.
