Why AI sovereignty is becoming a full-stack operating issue, according to the former Data61 CSIRO MD and Sovereign AI Australia’s CEO

At Data & AI Edge, Dr Jon Whittle and Simon Kriss examine how AI sovereignty is becoming a practical question of control across data, models, inference, agents, and governance.

AI sovereignty is becoming a live operating issue for governments and enterprises that depend on AI systems to deliver services, make decisions, and protect sensitive information.

In this Data & AI Edge discussion, Dr Jon Whittle, former Managing Director of Data61 at CSIRO, and Simon Kriss, CEO at Sovereign AI Australia, argue that sovereignty now shapes resilience, accountability, and economic value across the full AI stack.

Key takeaways:

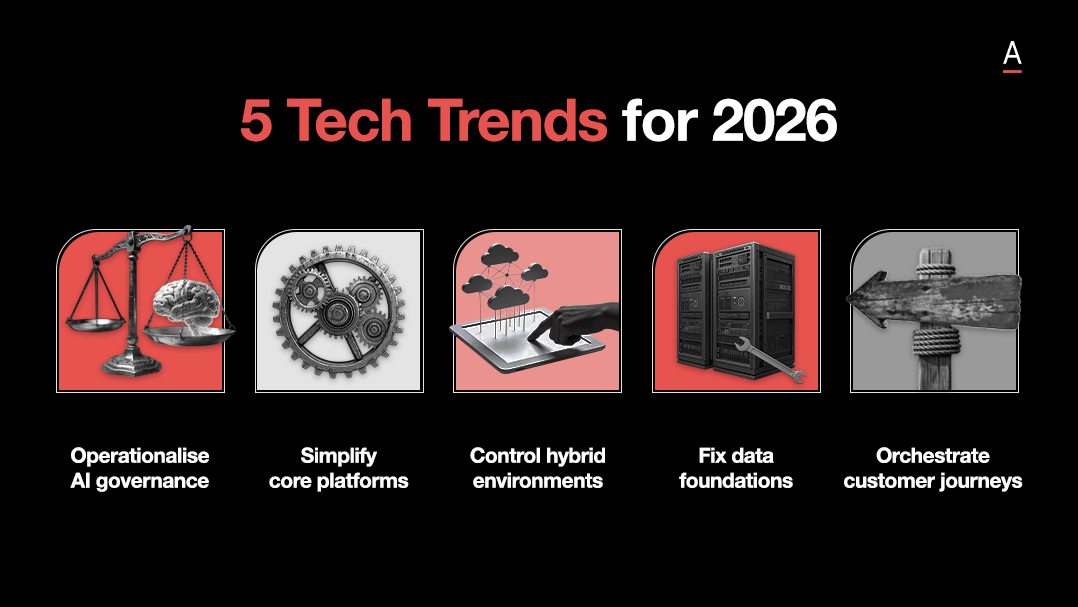

- AI sovereignty now spans data, models, inference, agents, governance, and applications, which makes full-stack control a strategic issue.

- Offshore AI dependence is creating legal, ethical, and operational exposure that many organisations still underestimate.

- Australia still has room to build sovereign capability in the next wave of AI, especially where local governance, energy efficiency, and agentic systems will matter most.

Sovereignty reaches across the full AI stack

AI sovereignty now touches every layer of the system, from data and foundation models through to inference, agents, governance, and applications.

Organisations can no longer treat one protected layer as enough, because exposure in any one part of the stack can weaken control across the rest.

Dr Whittle and Kriss argue that partial sovereignty creates overconfidence.

Local infrastructure may help, but it does not answer the wider questions around who controls the models, where inference happens, how agentic systems behave, and whether governance reflects Australian law and values.

The issue is broader, deeper, and much closer to enterprise operations than many organisations still assume.

Offshore dependence is creating operational exposure

As more organisations rely on offshore models, infrastructure, and agentic tooling, sovereignty risk is moving closer to day-to-day operations.

Legal exposure, weak visibility into training data, value misalignment, and limited explainability all affect how confidently organisations can deploy AI in sensitive settings.

That concern grows as AI systems move further into decision support and autonomous action.

When critical services and high-consequence workflows depend on external systems, control becomes harder to prove and accountability becomes harder to enforce.

Dr Whittle and Kriss describe that risk in practical terms: national resilience, organisational trust, and the long-term direction of economic value creation.

The next wave of AI capability is still open

Australia entered the large language model race late, but the next phase of AI capability is still being shaped.

That opens a narrower but still important opportunity to build local strength in newer areas such as neurosymbolic systems, world models, agent platforms, and governance frameworks aligned to Australian requirements.

Dr Whittle and Kriss frame sovereignty as a capability decision with long-term consequences.

Organisations that invest now in local skills, models, governance, and ecosystem partnerships improve their resilience and optionality before pressure arrives through regulation, incident, or public scrutiny.

The window is still open, but it will not stay open for long.