AI token costs can spiral fast without budgets, controls, and business ownership, warns Transformation & AI Specialist Naren Gangavarapu

In this ADAPT interview, Naren Gangavarapu warns that AI token costs can escalate quickly without tighter budgeting, model governance, observability, and human override controls.

AI specialist Naren Gangavarapu has warned organisations against managing the cost of consuming AI services the same way they manage traditional software licences, comparing the way AI tokens can burn through money to a poker machine.

Naren, who previously led a large tech modernisation program at Northern Beaches Council in Sydney, tells ADAPT that organisations need clear visibility into their use of AI tokens, the basic units of data that AI models use to process and generate text, images or audio content.

Users are charged based on the number of tokens processed by large language models (LLMs).

To keep AI costs manageable as tools are deployed at scale, Naren says organisations need to recognise that token consumption occurs with every interaction, effectively every ‘keystroke’.

Enterprises that don’t make this distinction could end up spending huge amounts of money that they can’t claw back, he says.

“You need to know how many tokens you’re buying and how many tokens are being consumed. This is very important.

“Basically, your tokens have to be budgeted per use case, and you have to make sure that you ‘take stock’ on a daily or weekly basis”, he says.
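The per-use-case budgeting and regular stock-take Naren describes could be sketched roughly as follows. This is a minimal illustration only: the `UseCaseBudget` class, the `take_stock` helper, the use-case names, the token figures and the 80% alert threshold are all hypothetical, not taken from the interview or from any vendor's billing API.

```python
from dataclasses import dataclass


@dataclass
class UseCaseBudget:
    """Token allowance for one use case over a budgeting period (hypothetical)."""
    name: str
    tokens_budgeted: int
    tokens_consumed: int = 0

    def __post_init__(self) -> None:
        # A zero or negative budget would make utilisation meaningless.
        if self.tokens_budgeted <= 0:
            raise ValueError("tokens_budgeted must be positive")

    def record(self, tokens: int) -> None:
        """Add tokens used by one interaction to the running total."""
        self.tokens_consumed += tokens

    @property
    def remaining(self) -> int:
        return self.tokens_budgeted - self.tokens_consumed

    @property
    def utilisation(self) -> float:
        return self.tokens_consumed / self.tokens_budgeted


def take_stock(budgets, alert_at=0.8):
    """Return the names of use cases at or above the alert threshold."""
    return [b.name for b in budgets if b.utilisation >= alert_at]


# Illustrative figures only.
budgets = [
    UseCaseBudget("customer-chatbot", tokens_budgeted=1_000_000),
    UseCaseBudget("document-summaries", tokens_budgeted=500_000),
]
budgets[0].record(850_000)
budgets[1].record(100_000)

flagged = take_stock(budgets)
```

Run daily or weekly, a check like this gives the business owner of each budget an early signal before a use case exhausts its allowance.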

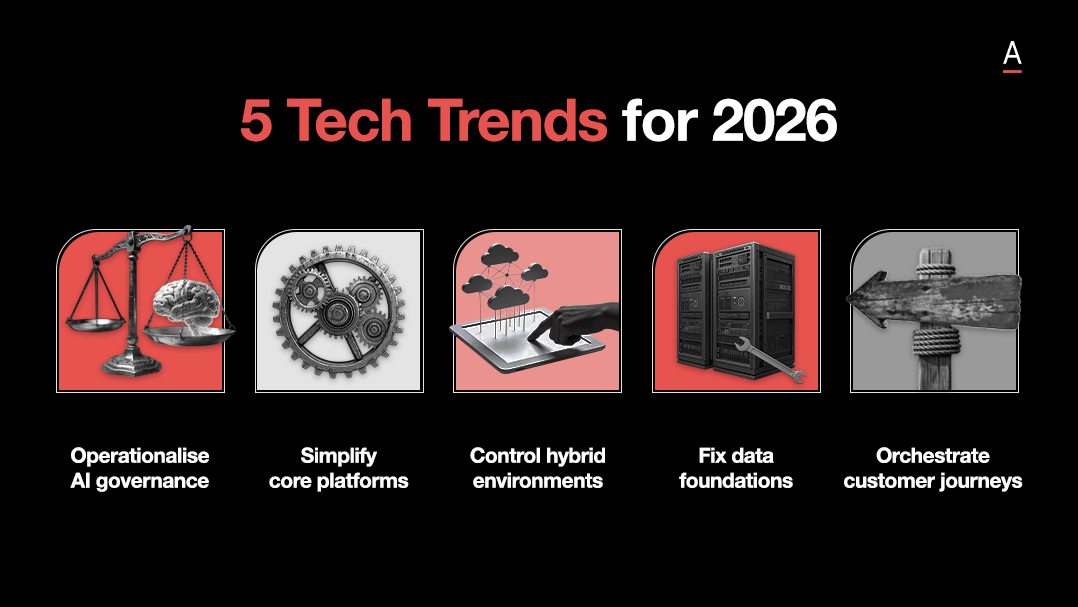

Costs can also be controlled through organisational policies that govern AI model selection, ensuring prompt workflows use models that are aligned and behave as expected.

Policies should define when more complex, more expensive models should be used and when simpler direct prompts and cheaper models are sufficient, he says.
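One way such a model selection policy might be expressed is as a simple lookup from task type to model tier. The sketch below is an assumption for illustration: the tier names, task types and the `select_model` function are hypothetical placeholders and do not refer to any real model or product.

```python
from enum import Enum


class Tier(Enum):
    # Placeholder tier labels, not real model names.
    CHEAP = "small-model"
    PREMIUM = "large-model"


# Hypothetical policy table, owned and approved by the business.
POLICY = {
    "faq-answer": Tier.CHEAP,
    "contract-review": Tier.PREMIUM,
}


def select_model(task_type: str) -> Tier:
    """Pick a model tier for a task.

    Unknown task types fall back to the cheaper tier, so use of the
    expensive tier always requires an explicit policy entry.
    """
    return POLICY.get(task_type, Tier.CHEAP)
```

Defaulting to the cheaper tier is a design choice in this sketch: it means a new workflow cannot start consuming the expensive model until someone adds it to the policy.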

“You need to have business ownership of these budgets so that [the] technology [group] doesn’t overspend because they get excited about building models and might end up giving a huge bill to the business.”

Agents need a ‘control plane’

The bulk of Australian CIOs surveyed by ADAPT say they are investing in AI agents, but many remain in the initial awareness and active exploration stages.

As of February 2026, 22% said they had targeted agentic implementations live in selected teams (up from 12% last August), while only 8% are scaling agents across their operations.

Naren says many agents can be turned on and deployed quickly across organisations, often faster than the process of hiring staff and navigating governance requirements.

He says agents without governance are experiments, but those with a control plane become a platform. He compares this to an air traffic control centre, which provides visibility of aircraft leaving and landing at an airport.

“[With agents], you need to know what you can control. What can the agents do and what can’t they do? Then you need to build observability, which at any point tells you what’s happening.

“There are incidents where agents have been trained to do something and they have gone and deleted databases. Say that’s happened and you have to explain…what’s happened [to the organisation]. There has to be auditability; this means you have to be observing it [the agent] in real time.

“And then the governance part is put on top…the policies on which models are used…and what data an agent has been approved to access”, he says.

Naren highlights that while most enterprises have ‘systems of record’ or knowledge in their core applications, intellectual property (IP) concerns are often preventing AI capabilities from being integrated into these platforms.

Instead, data is staged in platforms like Databricks and Snowflake, with strict access controls applied.

“Then you give selective control to agents in data stages so that your core is protected. That way, your security and IP access is manageable”, he says.

Finally, an intervention layer – which monitors, intercepts and regulates the actions of autonomous agents – provides a ‘kill switch’ that allows humans to override agentic processes.

“If we don’t have that, it’s quite unpredictable as to what some of these agents can do because they’re learning so quickly. If you don’t architect [agentic infrastructure in this way], the agents can thrive along with your risk…your cost gets out of control and you have no idea [which agent] has done what”, he says.
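The control, auditability and 'kill switch' ideas described above could be combined in a very small intervention layer. The following is a sketch under stated assumptions, not a description of any real agent framework: the `InterventionLayer` class, the agent name and the action names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class InterventionLayer:
    """Hypothetical gate that every agent action must pass through."""
    # Maps each agent name to the set of actions it is approved to take.
    allowed_actions: dict
    halted: bool = False
    audit_log: list = field(default_factory=list)

    def kill(self) -> None:
        """Human override: block all further agent actions."""
        self.halted = True

    def authorise(self, agent: str, action: str) -> bool:
        """Decide whether an action may proceed and record the decision."""
        permitted = (
            not self.halted
            and action in self.allowed_actions.get(agent, set())
        )
        # Every request is logged, whether or not it was permitted,
        # so there is a record of which agent attempted what and when.
        self.audit_log.append(
            (datetime.now(timezone.utc), agent, action, permitted)
        )
        return permitted


# Illustrative names only.
layer = InterventionLayer({"reporting-agent": {"read_staging_table"}})

approved = layer.authorise("reporting-agent", "read_staging_table")
blocked = layer.authorise("reporting-agent", "drop_database")

layer.kill()
after_kill = layer.authorise("reporting-agent", "read_staging_table")
```

In this sketch the approved action succeeds, the unapproved one is refused, and nothing is permitted once the kill switch has been used, while the audit log retains all three requests.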

Naren adds that many organisations continue to struggle with maintaining accurate inventories of APIs and microservices, leaving them potentially exposed to cyber attackers. These risks, he says, are amplified when agents are allowed to proliferate across organisations without the right controls.

“We need to make sure that we bring in those governance controls and be extra careful to ensure that governance and observability are built in from the get-go to manage the scale and risk aspect of [AI agents].”

Intelligence by subscription, AI consciousness

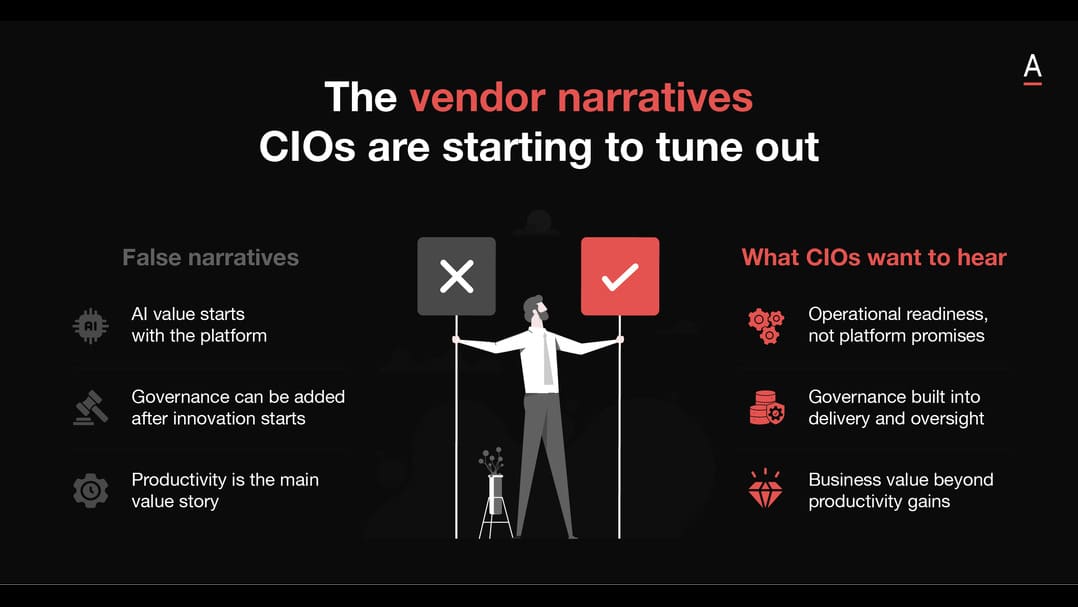

Naren is sceptical about the CEO narrative framing AI intelligence as a subscription service or suggesting that AI models may become conscious.

He argues the tech market has created hype and a narrative that puts unnecessary pressure on organisations to buy these solutions.

“This is an adoption tactic that [vendors] use. I’ve seen this happen with CRMs, with cloud, with every new product that comes out in the market. Organisations have already invested huge amounts of money in their core systems; they’re not going to move away from them. They’ve invested hundreds of millions of dollars.

“So how do you shake that up? By creating this consistent narrative. There are enough people with enough intelligence around the world with public representation as well as within governments, that are closely watching this.”

He says governments should play an active role in ensuring organisations, countries and their citizens are “not impacted by such narratives” or pressured into hasty decisions.

“AI achieving consciousness? I don’t see it in my career…it’s human beings that train it [AI].”