Boards are asking the wrong AI questions, says Sovereign Australia AI’s CEO

In this ADAPT Insider episode, leading AI expert Simon Kriss explores the trust, governance, cost, and sovereignty issues that keep many Australian organisations stuck between pilot activity and real AI scale.

Pilot mode persists when three groups lose confidence at the same time: the CFO cannot justify the return, the Chief Risk Officer cannot map the risk, and the board cannot govern what it does not understand.

Simon Kriss uses that breakdown to explain why AI scale in Australia is being held back less by tooling than by trust, governance maturity, and cost discipline.

Listen to the full episode on Apple Podcasts and Spotify.

Key takeaways:

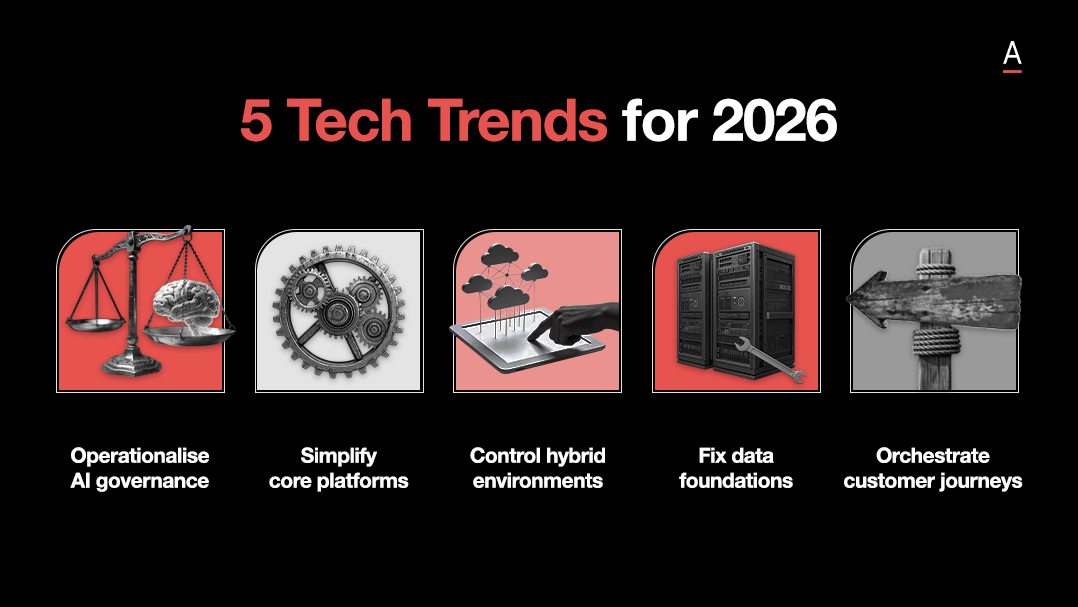

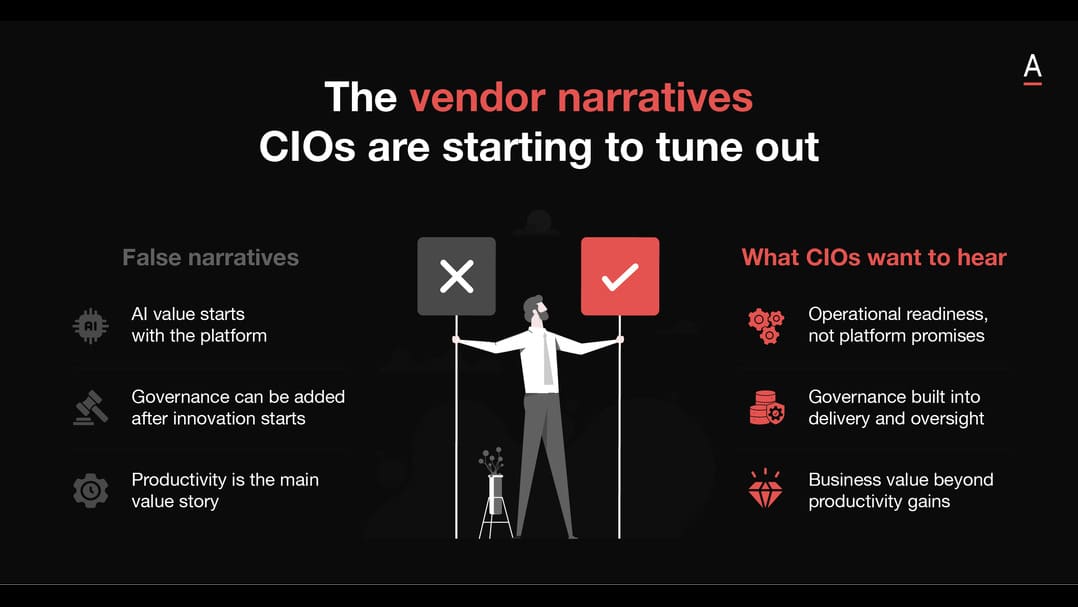

- AI fails when treated as a plug‑in: Governance, risk and operating models must change with the technology.

- Token cost is the new cloud bill shock: AI economics demand active financial controls long before scale.

- Selective sovereignty creates advantage: Cultural alignment reduces cost, increases trust and keeps value local.

Trust collapses when AI is forced into old business models

Most organisations do not stall because the technology is missing.

They stall because finance, risk, and governance are still trying to judge AI through models built for more predictable systems.

Simon says corporate Australia has been “very, very, very slow to adopt” because “we simply don’t” trust AI, even though “we know the tech” and “we get it.”

He then points to two practical blockers that surface when organisations try to move from proof of concept to production: the CFO cannot see where the ROI comes from, and the Chief Risk Officer struggles to apply a single risk logic to very different use cases, such as summarising phone calls versus helping to synthesise a drug for human use.

His conclusion is direct: organisations “can’t just shoehorn AI into an existing business model” because the business model, the risk model, and the governance model all need to change with the technology.

AI economics replace cloud economics as the next leadership shock

The cost problem changes shape once AI moves beyond contained pilots and into agent-driven work.

Simon separates simple exploitation use cases, where the ROI calculation looks like any other business case, from exploratory and agentic use cases, where the cost profile becomes much harder to predict.

He says the issue intensifies with agents because they do not complete a task once; they iterate, backtrack, and retry, sometimes “20 or 30 times,” which can blow out token consumption rapidly.

In his example, something that started at “$28 per month for five people in proof of concept” can turn into a bill of around half a million dollars once lagged billing catches up with actual usage.
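
To make the retry multiplier concrete, here is a minimal Python sketch. The function name `monthly_token_cost` and every input (task volume, tokens per attempt, a price per million tokens, and 25 attempts as a midpoint of the “20 or 30” retries) are hypothetical placeholders, not figures from the episode, and the sketch assumes each attempt consumes roughly the same number of tokens.

```python
def monthly_token_cost(tasks_per_month: int,
                       tokens_per_attempt: int,
                       attempts_per_task: int,
                       price_per_million_tokens: float) -> float:
    """Estimate monthly spend in dollars.

    Simplifying assumption: every attempt consumes about the same number
    of tokens, so cost scales linearly with the number of attempts.
    """
    total_tokens = tasks_per_month * tokens_per_attempt * attempts_per_task
    return total_tokens / 1_000_000 * price_per_million_tokens


# Hypothetical placeholder inputs, not figures from the episode.
single_pass = monthly_token_cost(10_000, 5_000, 1, 3.0)
agentic = monthly_token_cost(10_000, 5_000, 25, 3.0)
print(f"single pass: ${single_pass:,.2f}")         # single pass: $150.00
print(f"agentic (25 attempts): ${agentic:,.2f}")   # agentic (25 attempts): $3,750.00
```

Under this linear model, 25 attempts cost 25 times a single pass. If an agent also feeds earlier attempts back into its context, each retry carries more tokens than the last and the growth would be steeper still.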

He is also clear that the market is still “figuring it out,” so leaders cannot assume AI cost controls are already as mature as cloud FinOps practices have become.
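
One form an active financial control could take is a spend cap checked before each model call, so an overrun is stopped in flight rather than discovered on the invoice. The sketch below is hypothetical: `TokenBudget`, `BudgetExceeded`, `run_agent_task`, the per-token price, and the simulated “succeeds on attempt N” behaviour are illustrative assumptions, not a specific vendor's API or a practice described in the episode.

```python
class BudgetExceeded(Exception):
    """Raised when a charge would push spend past the configured cap."""


class TokenBudget:
    """Hypothetical spend guard that tracks estimated dollars for one
    billing period and refuses any charge that would exceed the cap."""

    def __init__(self, monthly_cap_dollars: float, price_per_million_tokens: float):
        self.cap = monthly_cap_dollars
        self.price = price_per_million_tokens
        self.spent = 0.0

    def charge(self, tokens: int) -> float:
        """Record a call's estimated cost, or raise before recording it."""
        cost = tokens / 1_000_000 * self.price
        if self.spent + cost > self.cap:
            raise BudgetExceeded(
                f"request would bring spend to ${self.spent + cost:,.2f}, "
                f"cap is ${self.cap:,.2f}"
            )
        self.spent += cost
        return self.spent


def run_agent_task(budget: TokenBudget, tokens_per_attempt: int,
                   max_attempts: int, succeeds_on: int) -> int:
    """Simulate an agent that retries until it succeeds on attempt
    `succeeds_on`, limited both by `max_attempts` and by the shared budget.
    Returns the number of attempts used."""
    for attempt in range(1, max_attempts + 1):
        budget.charge(tokens_per_attempt)  # raises BudgetExceeded if over cap
        if attempt == succeeds_on:
            return attempt
    raise RuntimeError("attempt limit reached without success")


# Hypothetical example: a tiny cap stops a runaway retry loop early.
budget = TokenBudget(monthly_cap_dollars=0.05, price_per_million_tokens=3.0)
try:
    run_agent_task(budget, tokens_per_attempt=5_000, max_attempts=30, succeeds_on=10)
except BudgetExceeded as err:
    print("stopped early:", err)
```

Capping both attempts and dollars addresses the two failure modes the episode describes: agents that retry many times, and bills that only reveal the cost after the fact.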

Sovereignty shifts from risk control to economic advantage

Sovereignty becomes more valuable when leaders stop treating it as a narrow hosting question and start treating it as a control and value capture question across inference, models, and cultural fit.

Simon says sovereignty is “about protectionism more than isolationism” and argues that organisations still mostly see it as a way of controlling risk, even though it is starting to become “an economic enabler.”

He points to dependence on offshore inference, exposure to the US CLOUD Act, limited control over how foreign foundation models are built, and token spend that continues to flow offshore.

He also gives a more operational argument for local relevance: a chatbot that better understands Australian language and nuance will use fewer tokens and create better customer loyalty because it reaches understanding faster.
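
The token argument can be illustrated with simple arithmetic. This Python sketch assumes the chatbot resends the full conversation history on every turn, as is common with stateless chat-completion APIs; the function name `conversation_tokens` and the token counts are hypothetical. Under that assumption, turn i costs i × (user + reply) tokens, so total usage grows with the square of the number of turns.

```python
def conversation_tokens(turns: int, user_tokens: int, reply_tokens: int) -> int:
    """Total billed input + output tokens for a conversation in which every
    turn resends the full history (an assumption about the chatbot's design)."""
    total = 0
    history = 0
    for _ in range(turns):
        prompt = history + user_tokens    # input: prior history plus the new message
        total += prompt + reply_tokens    # billed input and output for this turn
        history = prompt + reply_tokens   # the reply becomes part of the history
    return total


# Hypothetical: the same query resolved in three turns versus five.
fast = conversation_tokens(turns=3, user_tokens=50, reply_tokens=150)
slow = conversation_tokens(turns=5, user_tokens=50, reply_tokens=150)
print(fast, slow)  # 1200 3000
```

With these placeholder values, removing two clarifying turns cuts token usage by 60%, which is the mechanism behind the claim that reaching understanding faster costs less.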

In his view, that makes selective sovereignty a practical commercial decision as much as a governance one.