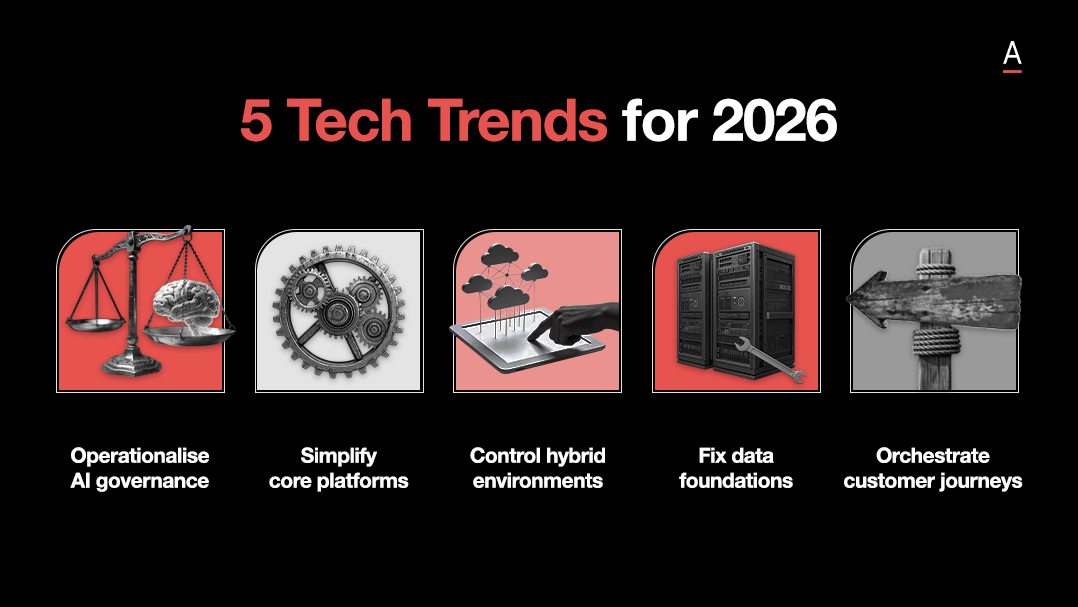

AI ambition is running well ahead of execution. While investment is rising and most enterprises can point to at least one live use case, far fewer can show repeatable value across the business.

In ADAPT’s survey of 200 Australian CIOs, 70% planned to increase investment in generative AI, yet only 25% had automated workflows in place. Just 13% considered their AI efforts successful.

For Alan, that gap is not mainly a technology problem.

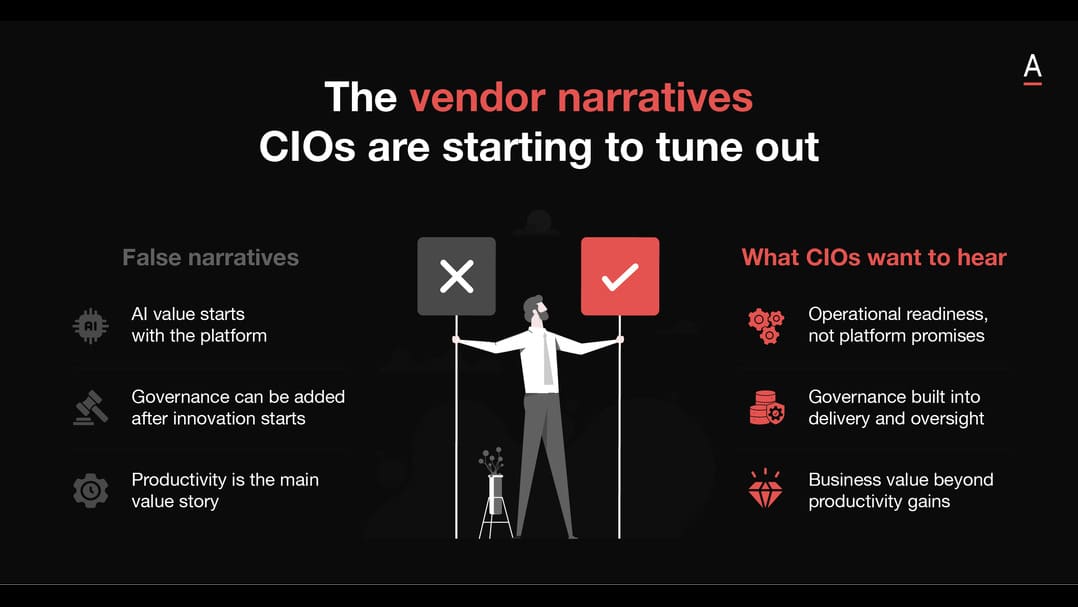

It reflects weaker alignment between AI programs, business outcomes, and the disciplines needed to make adoption stick.

Business alignment is what gives AI a reason to scale

AI programs gain momentum when they are attached to a clear commercial or operational problem.

Without that anchor, organisations end up with experimentation that looks active but struggles to convert into repeatable value.

That is the pattern Alan sees in his conversations with executives, and it lines up closely with ADAPT’s data.

Many organisations have one visible use case in market, but far fewer can show value across ten or more.

His view is that the strongest programs begin with a defined problem, whether in procurement, financial analysis, or another practical workflow, and then build from there.

The real differentiator is whether the initiative is tied to a genuine business need and a measurable result.

Education turns AI access into usable capability

Giving people access to AI does not guarantee better work.

Teams still need the confidence, context, and practical understanding to apply it well inside real workflows.

Alan compares the challenge to early dashboard adoption.

Dashboards only became valuable when organisations taught people how to use them meaningfully, and he argues that AI follows the same pattern.

Prompt access on its own is not enough.

The organisations making stronger progress are building broader business fluency, helping teams understand where AI fits, how it should be used, and what good usage looks like in practice.

That matters because adoption slows quickly when employees are left with a tool but no structure around how to turn it into impact.

ROI discipline decides whether AI earns long-term support

The first AI projects are often the easiest to justify.

The harder task comes later, when leaders need to show that value is being sustained, expanded, and translated into outcomes the business actually cares about.

That pressure is becoming harder to ignore.

In ADAPT’s data, 50% of CIOs struggle to measure ongoing AI benefits, while 40% of CFOs remain sceptical about whether technology investments are delivering the value promised.

Alan’s point is that successful organisations treat validation as part of the program from the start.

They track impact, stay close to the business outcome, and avoid mistaking activity for return.

That discipline becomes critical when AI moves beyond early pilots and into broader investment decisions.

Organisations move further when they align programs to business priorities, teach people how to use the tools well, and keep proving the value as adoption grows.