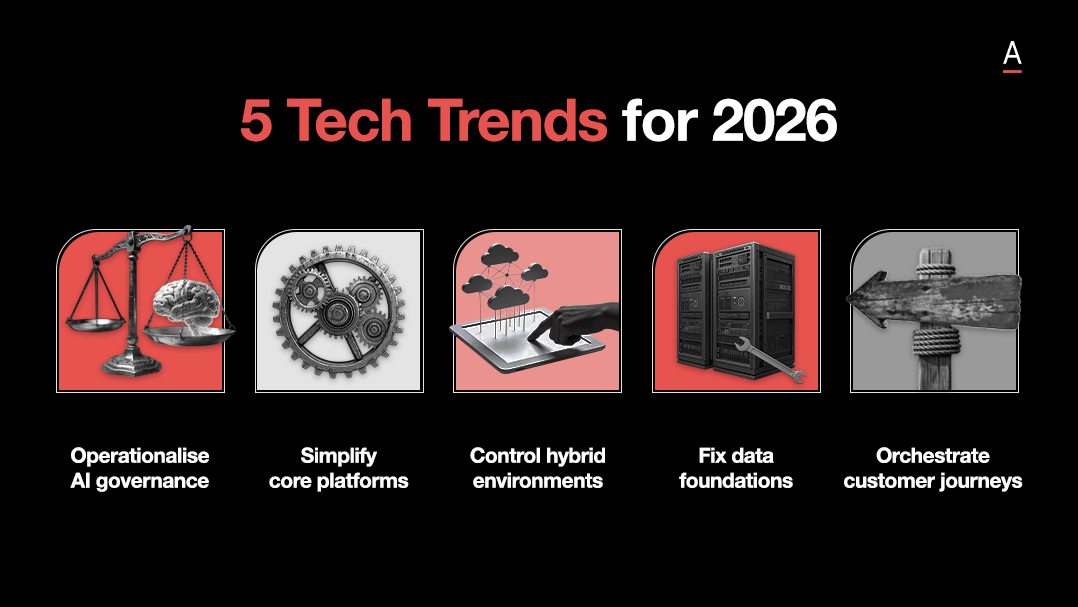

The weakest AI strategies still treat the technology like a bolt-on productivity tool.

For Dawid Naude, the point of AI is not to make the current business run a little faster and a little cheaper.

It is to use the time, intelligence, and optionality AI creates to open new markets, build new products, and rethink how the business competes in the first place.

That is a much higher bar, and it changes where leaders should start.

Key takeaways:

- AI capability improves when leaders use the tools themselves instead of outsourcing their understanding.

- Most companies are still treating AI as an efficiency play when the bigger opportunity is business model change, new products, and new markets.

- The fastest way to test value is to start with general AI tools, prove a use case cheaply, then invest in narrower applications where higher accuracy matters.

Leaders need first-hand AI fluency

Leadership teams make poor AI decisions when their understanding is second-hand.

They need direct experience with the tools before they can judge where the technology fits, what it can do well, and where it still falls short.

That is why Dawid has changed his own approach. Instead of beginning with use-case workshops and recommendation decks, he now says the starting point should be the leadership team using a general assistant in the context of their own roles.

His example is practical: put the app on your phone, use voice mode, explain your role, and ask it to teach you.

The aim is to build better judgement through direct use.

Efficiency alone is too small a goal

A lot of AI activity still focuses on doing current work faster and cheaper.

Dawid argues that this misses the larger opportunity.

His benchmark is simple.

If AI gives people substantial time back, that time should be redirected into new products, new markets, and new experiments.

He is especially blunt about professional services, where he sees AI putting existing business models under pressure.

In those sectors, he argues that competing on expertise alone becomes harder, which pushes leaders towards reinvention rather than marginal efficiency gains.

He is equally dismissive of AI theatre.

Meeting summaries that nobody reads and call centre automation that ignores the root cause of customer demand do not prove much. They create activity, not value.

Start broad, prove value, then go narrow

AI does not need to be scaled the same way as older enterprise software. Some use cases are best tested with broad, general assistants before any money is spent on a narrower application.

That is how Dawid frames general versus narrow AI.

A general tool gives leaders and teams a cheap way to test whether the technology is directionally useful.

If it can already perform well enough to show promise, that is a signal to invest further.

His olive health example makes the point: if a general model can get early predictions roughly right from a simple photo, there may be enough value in the use case to justify the work needed to push accuracy much higher.

That same logic shapes how he talks about ROI.

Leaders should define the metric they want to move or the risk they want to reduce, nominate internal champions, run lean experiments, and come back with evidence tied to real outcomes.

Cheap tests that show a measurable shift are more useful than broad claims about transformation.